Journal of Jilin University (Engineering and Technology Edition) ›› 2025, Vol. 55 ›› Issue (7): 2393-2401. doi: 10.13229/j.cnki.jdxbgxb.20231128

• Computer Science and Technology •

Few-shot remote sensing image classification based on contrastive learning text perception

- College of Computer Science and Technology, Jilin University, Changchun 130012, China

Abstract:

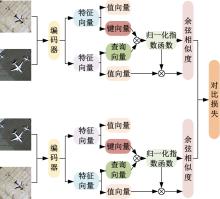

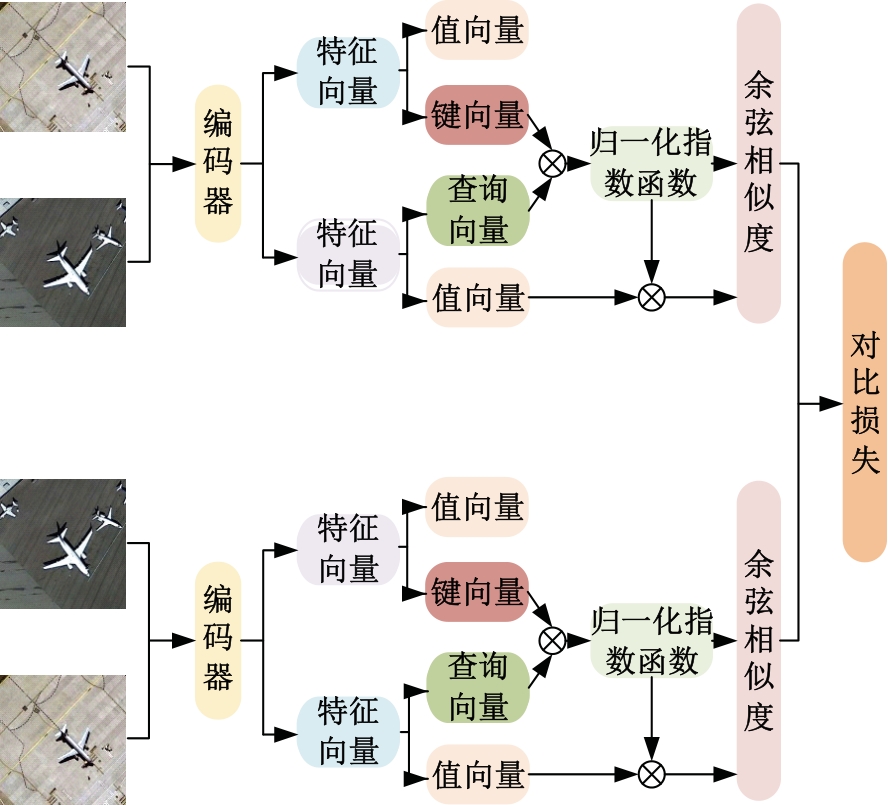

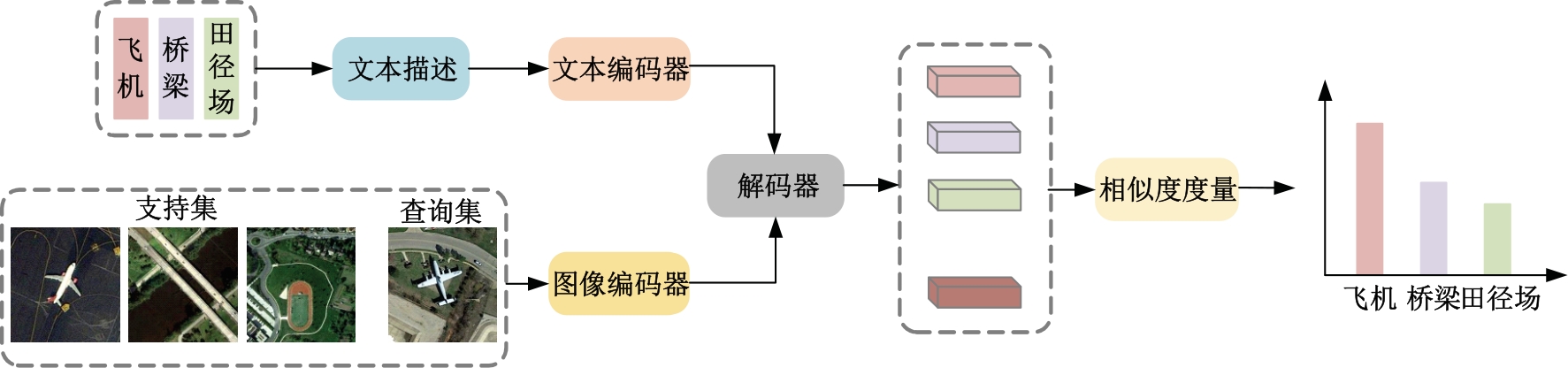

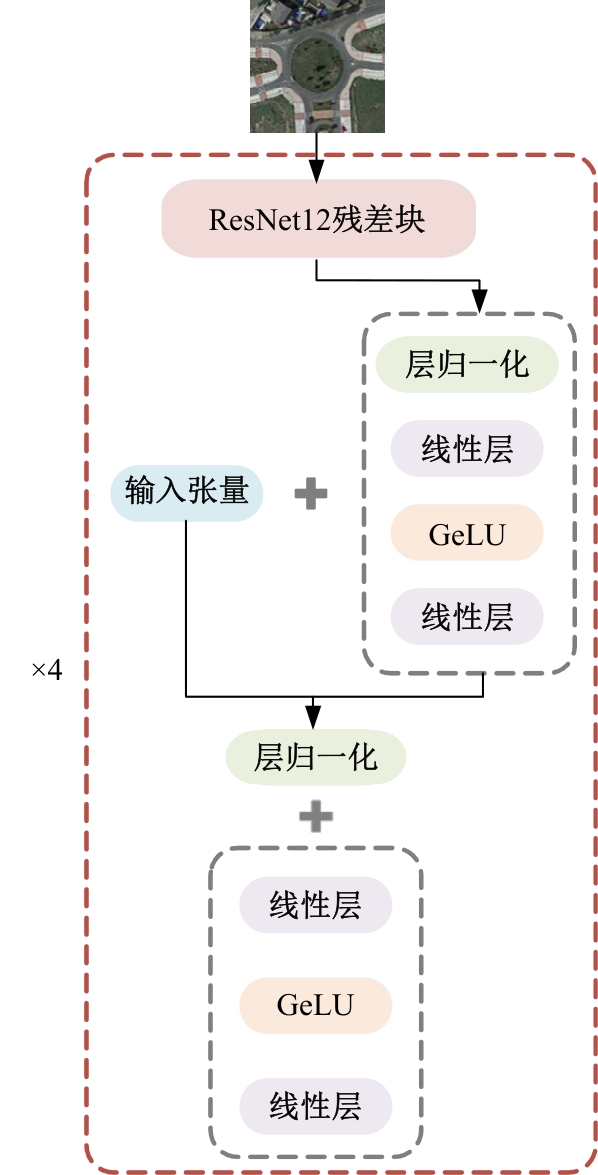

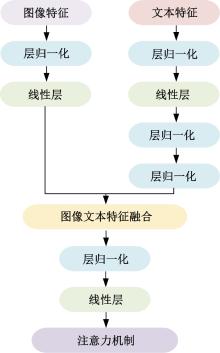

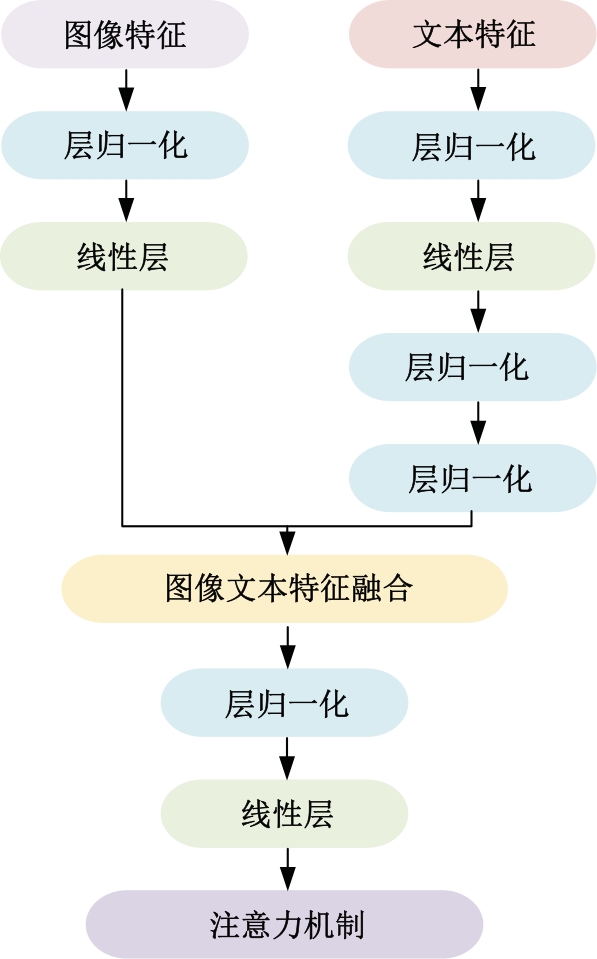

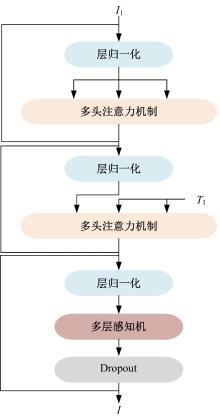

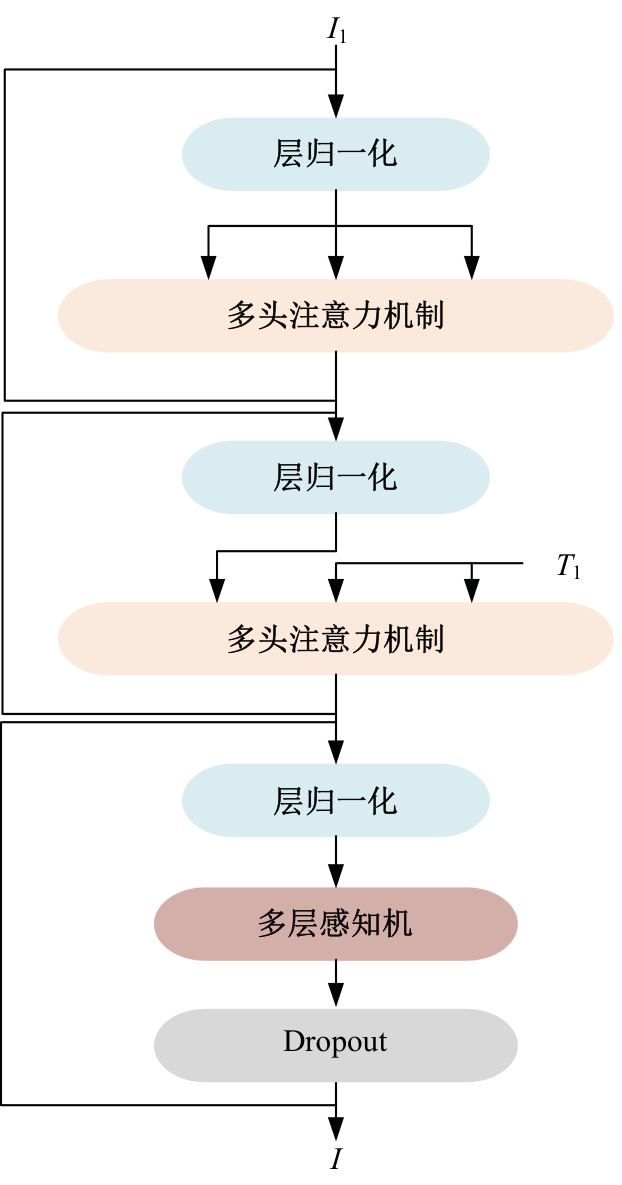

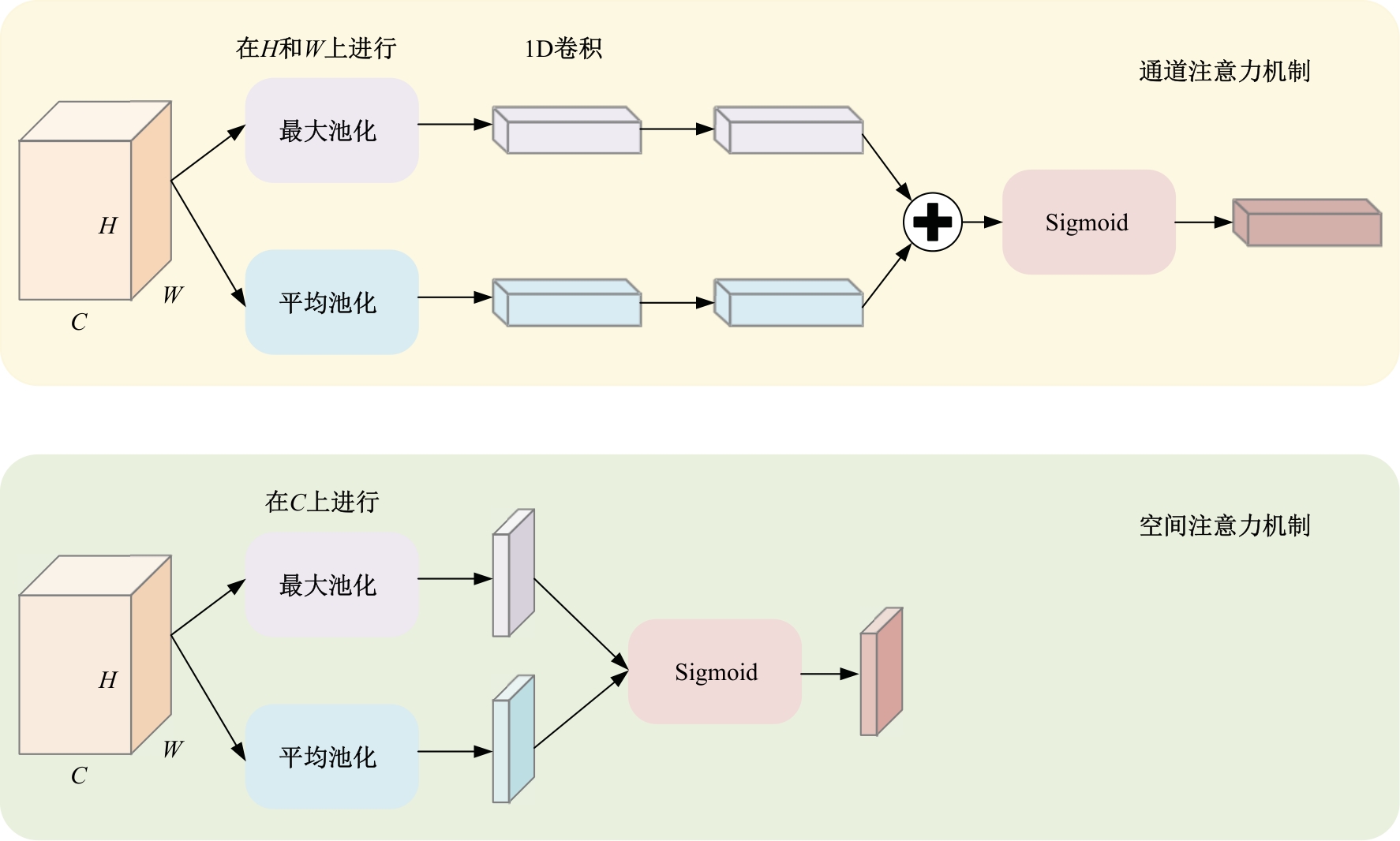

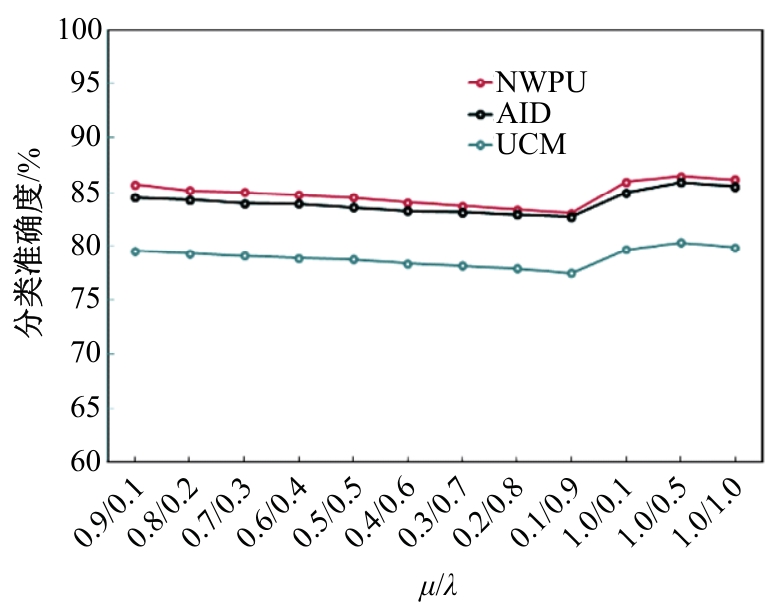

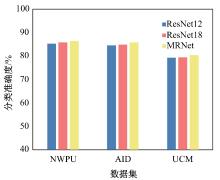

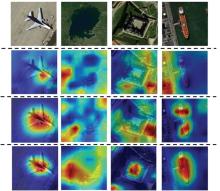

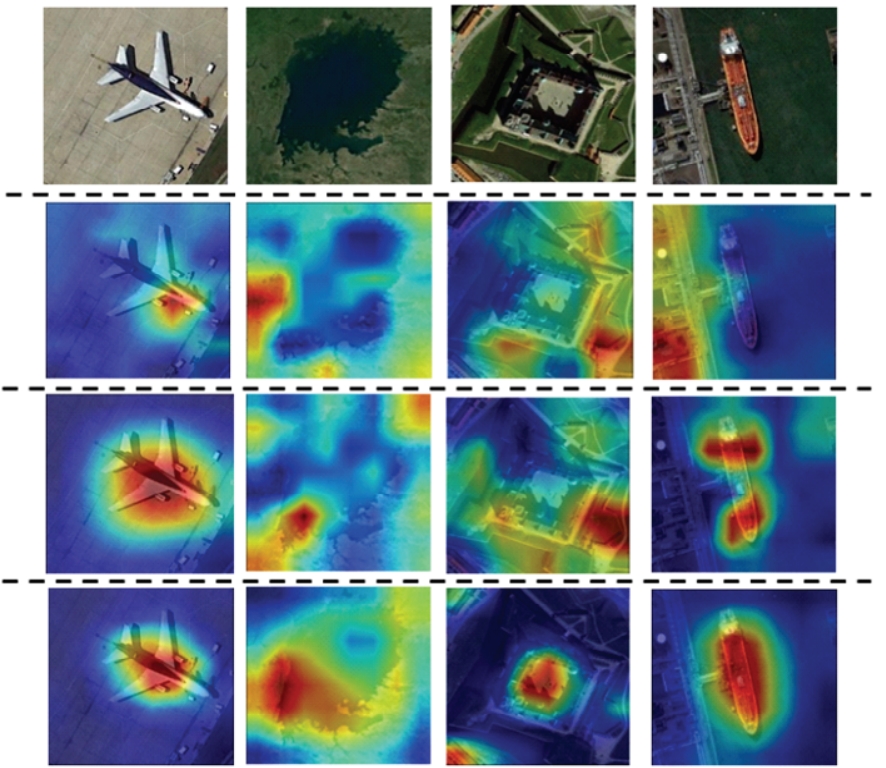

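Existing methods mainly rely on the single modality of remote sensing images to handle low similarity among samples of the same class. To address this limitation, this paper proposes a remote sensing image classification method based on multimodal learning. First, the spatial features of the images are rectified and the image encoder is pre-trained with contrastive learning to generate image features, while a text encoder generates text features. Second, a feature decoder is introduced to obtain text-aware visual features, and a new attention mechanism is proposed for the feature fusion stage. Third, a new image encoder is designed to improve classification accuracy. Finally, class prediction is performed by computing the similarity between the support set and the query set. In experiments on the NWPU-RESISC45, AID, and UC Merced datasets, the 5-way 5-shot accuracy reaches 86.46%, 85.89%, and 80.32%, respectively, outperforming existing few-shot remote sensing image classification methods.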
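The final step of the abstract, predicting a class from the similarity between the support set and the query set, can be illustrated with a minimal sketch. The abstract does not say which similarity measure or aggregation the authors use, so the choices here are assumptions: each class is summarized by the mean of its support features (a prototype), and cosine similarity ranks the classes. The function names, the toy labels, and the feature values are hypothetical, and the features stand in for whatever the encoders would produce.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two feature vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def class_prototypes(support: Dict[str, List[Vector]]) -> Dict[str, List[float]]:
    """Average each class's support features into one prototype (assumed aggregation)."""
    prototypes = {}
    for label, features in support.items():
        count = len(features)
        # zip(*features) iterates over feature dimensions across all shots.
        prototypes[label] = [sum(dim) / count for dim in zip(*features)]
    return prototypes


def predict(query: Vector, support: Dict[str, List[Vector]]) -> str:
    """Return the class whose prototype is most similar to the query feature."""
    prototypes = class_prototypes(support)
    return max(prototypes, key=lambda label: cosine_similarity(query, prototypes[label]))


# Toy 2-way 2-shot episode with hand-made 3-D features (illustrative values only).
toy_support = {
    "airport": [[1.0, 0.1, 0.0], [0.9, 0.2, 0.1]],
    "forest": [[0.0, 1.0, 0.2], [0.1, 0.8, 0.3]],
}
toy_query = [0.8, 0.3, 0.0]
predicted_label = predict(toy_query, toy_support)
```

A 5-way 5-shot episode, as in the reported experiments, would use the same functions with five classes and five support features per class. Accuracy would then be the fraction of query features whose predicted label matches the true label, averaged over many sampled episodes.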

CLC number:

- TP751.1
