Journal of Jilin University (Engineering and Technology Edition), 2025, Vol. 55, Issue (8): 2722-2731. doi: 10.13229/j.cnki.jdxbgxb.20240012

• Computer Science and Technology •

RAUGAN: infrared image colorization method based on cycle generative adversarial networks

- School of Electronic and Information Engineering, Changchun University of Science and Technology, Changchun 130022, China

Abstract:

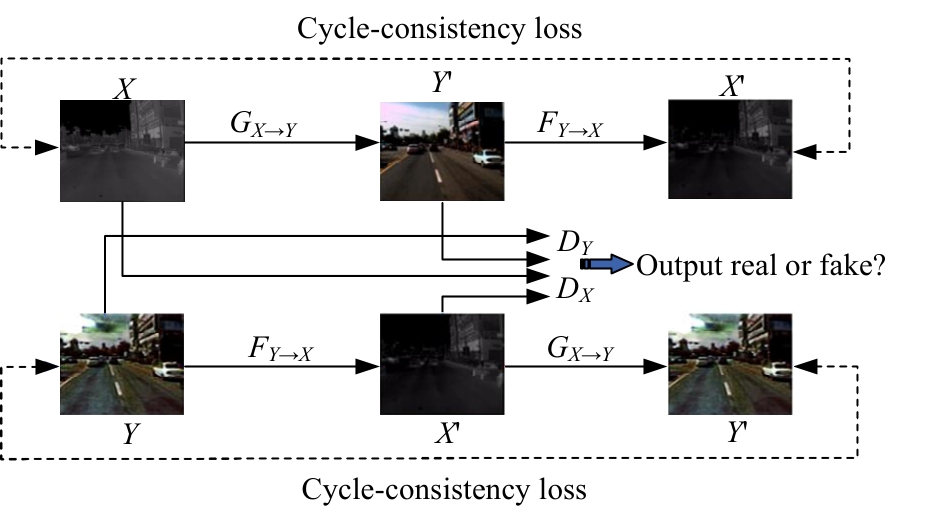

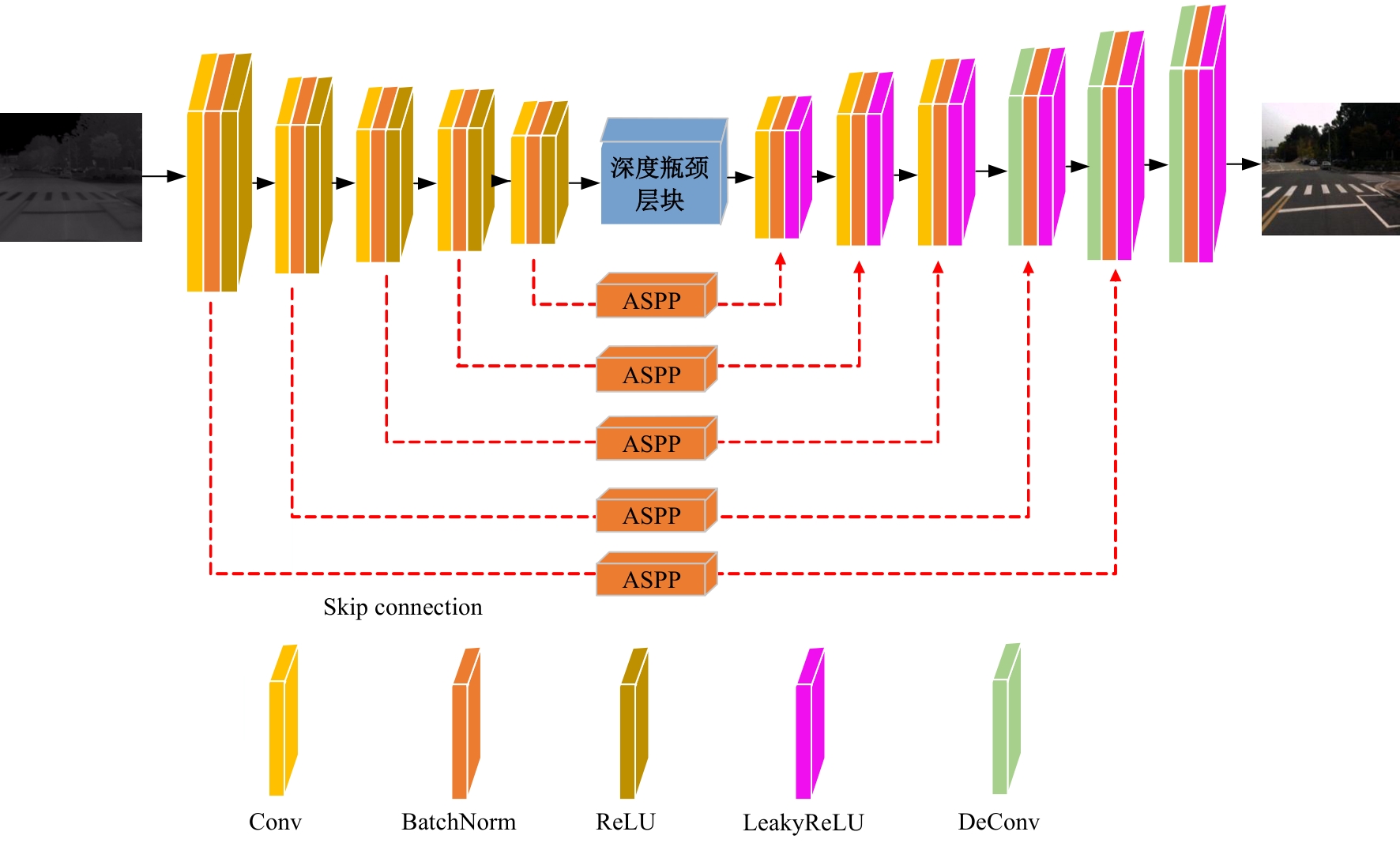

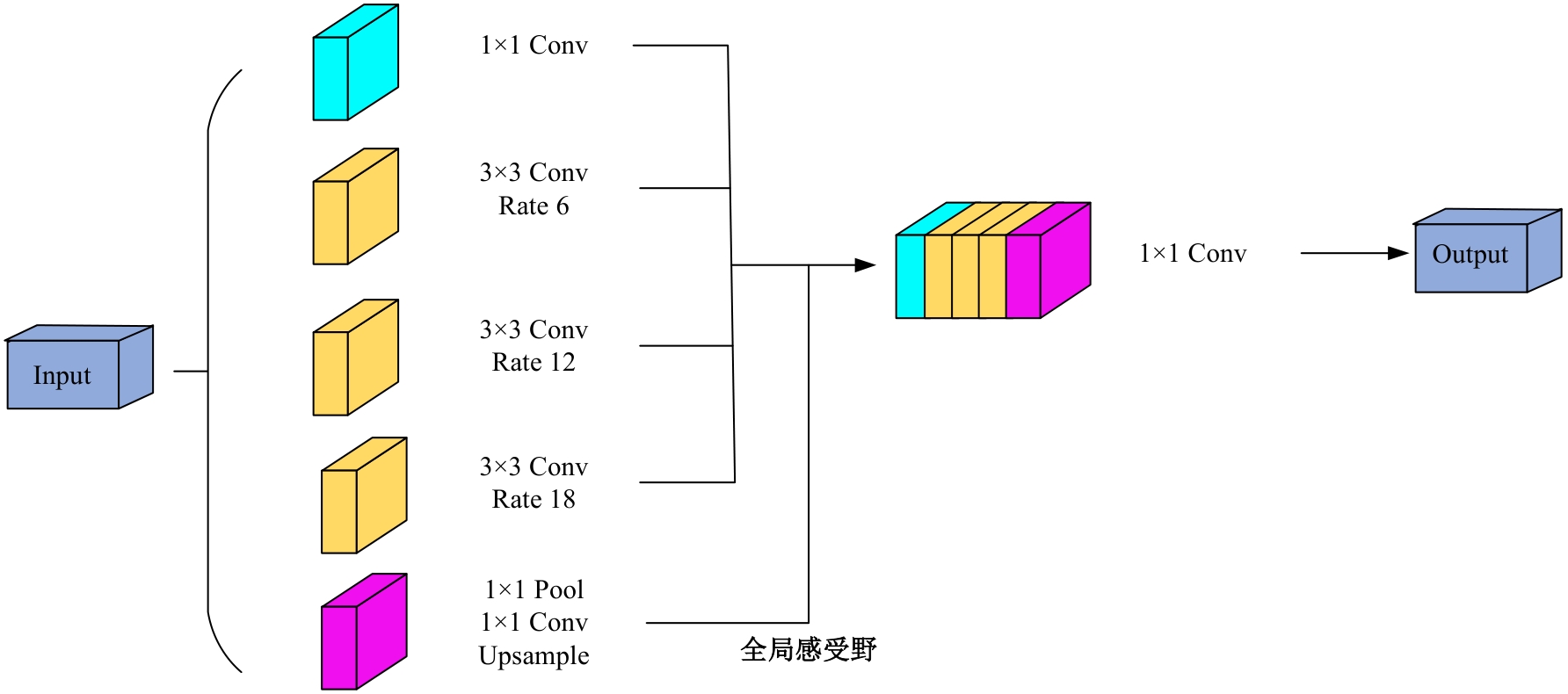

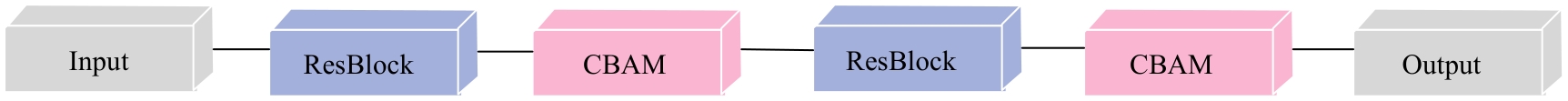

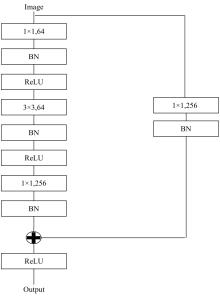

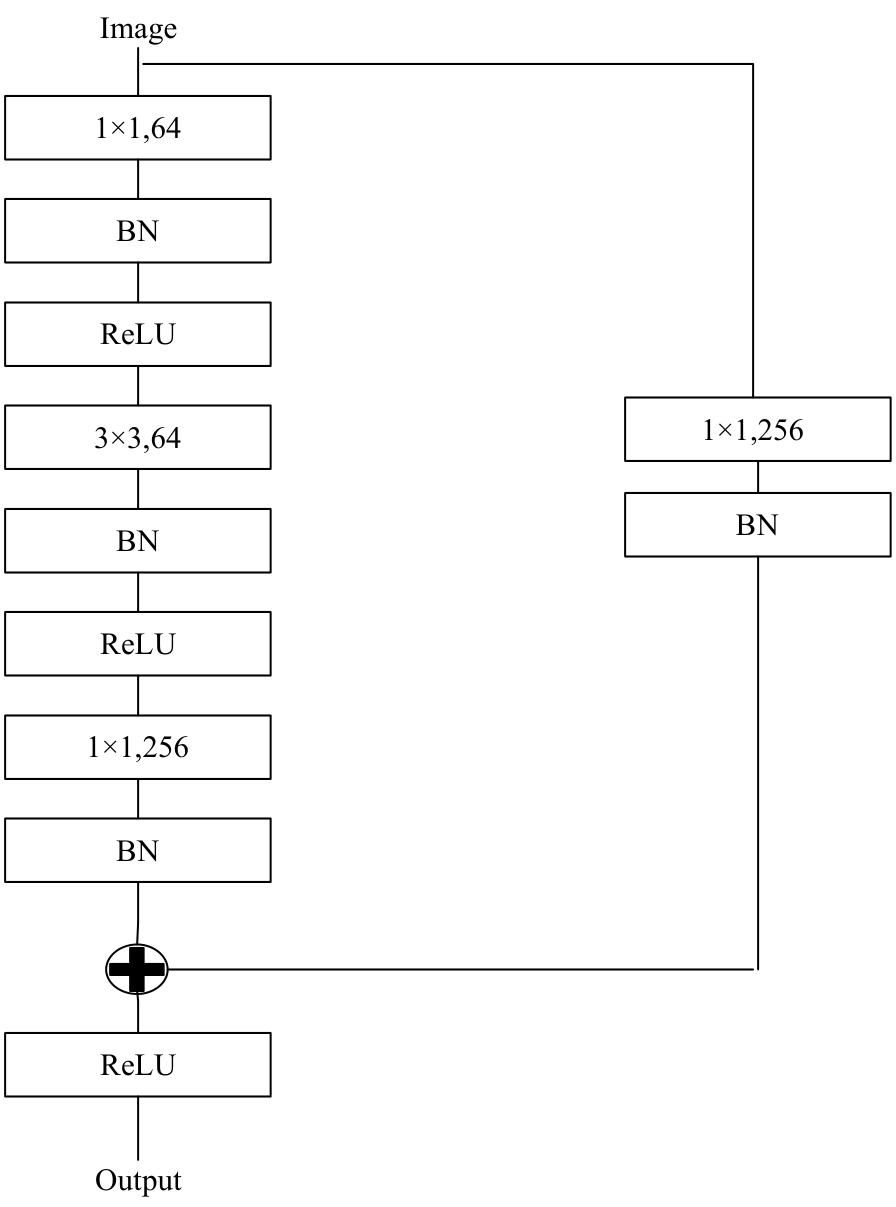

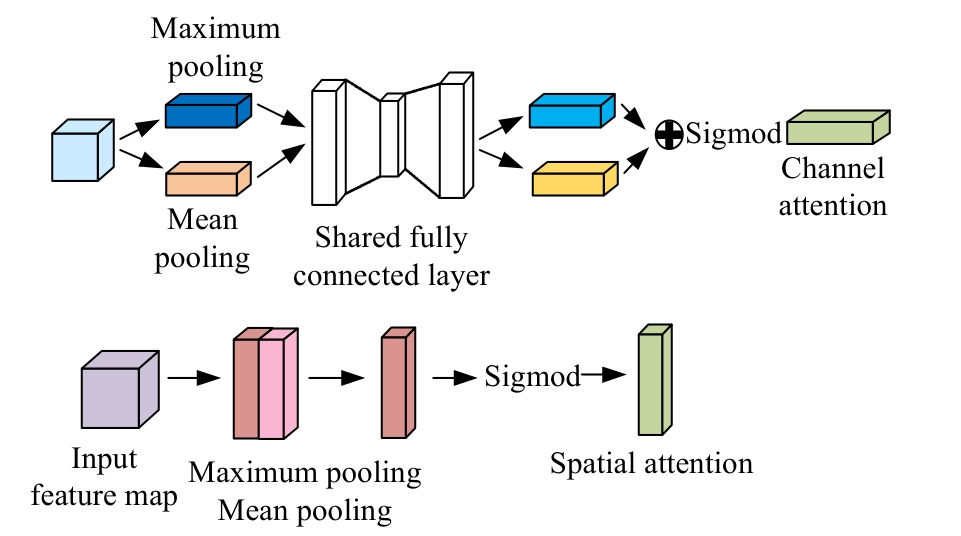

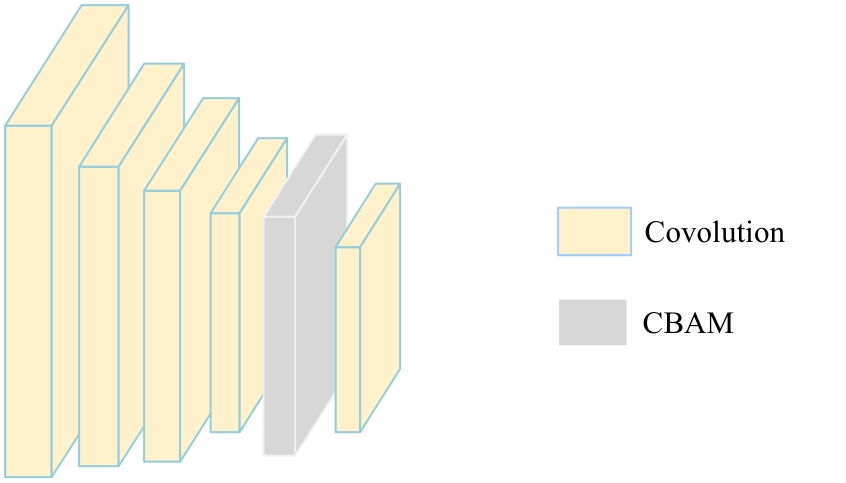

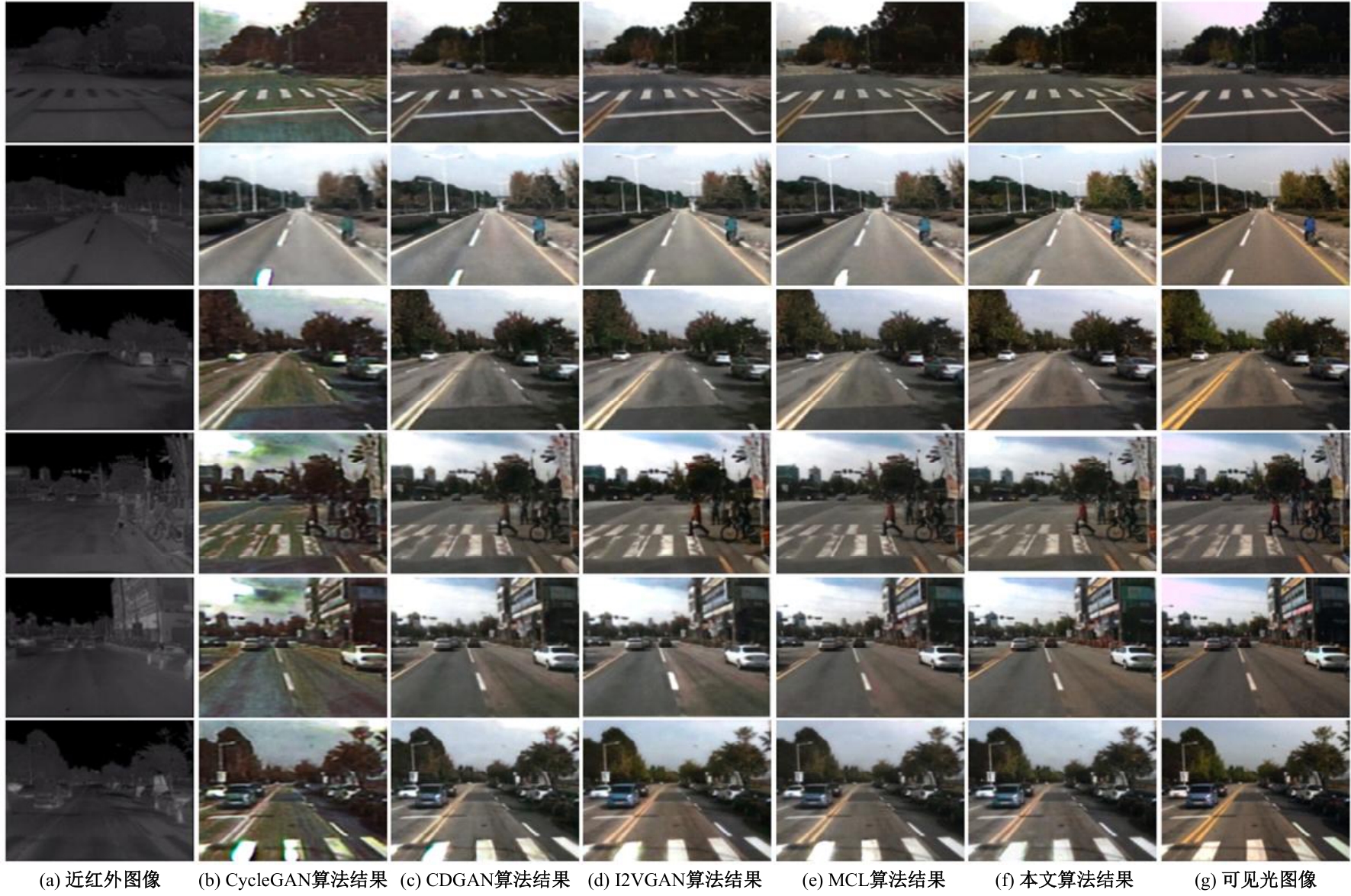

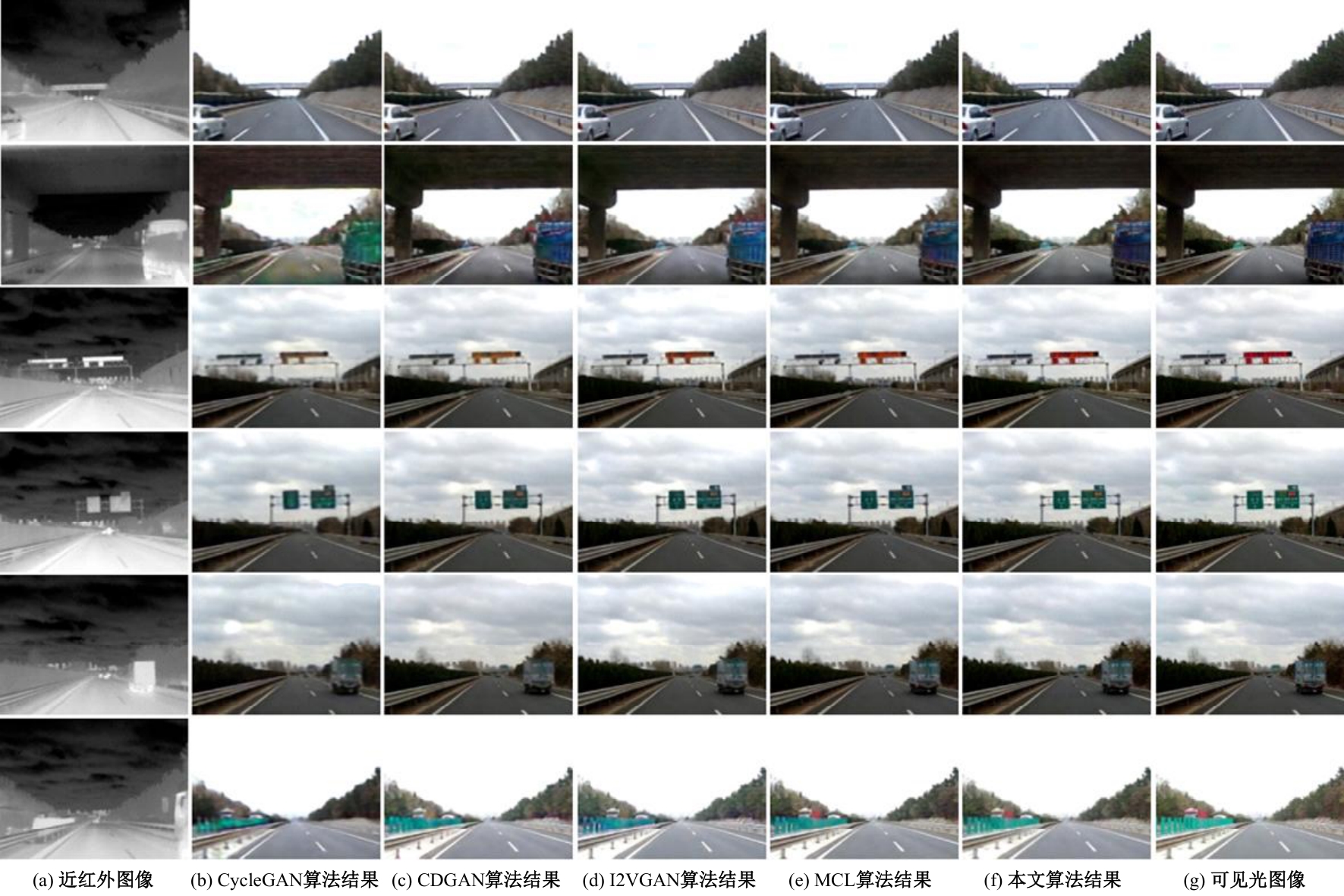

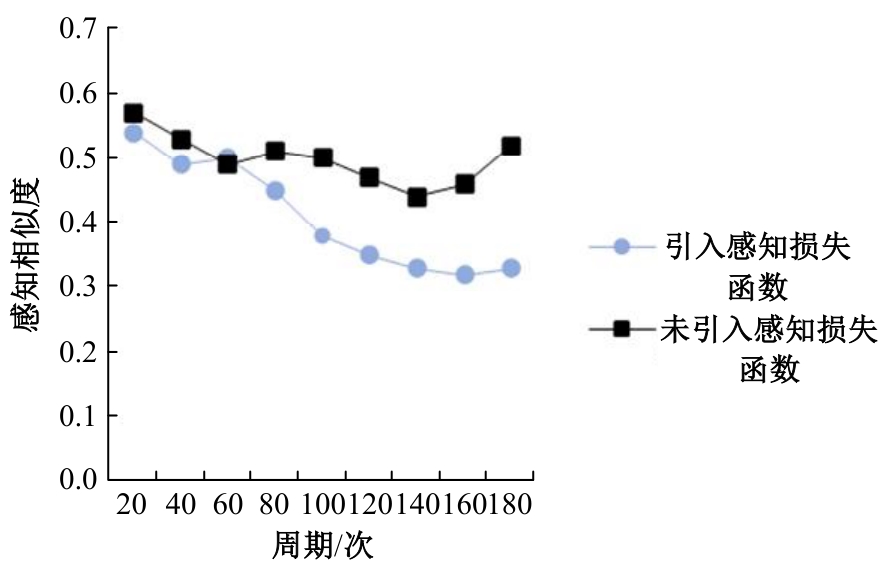

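To address color distortion, semantic ambiguity, and unclear texture and shape in near-infrared image colorization, an infrared image colorization method (RAUGAN) is proposed. First, the generator of the CycleGAN network is improved: a Res-ASPP-Unet network is designed and integrated, in which atrous spatial pyramid pooling (ASPP) is attached at the skip connections of the original UNet, so that the output feature maps at each scale in the decoding branch can be combined with the corresponding output feature maps of the encoder. Second, a deep bottleneck block composed of residual blocks and the convolutional block attention module (CBAM, which applies channel and spatial attention) is designed to replace the bottleneck layer of the UNet, enhancing local-region features and making them easier to discriminate. Finally, a perceptual loss function is introduced in the discriminator network to address distortion in color restoration. Experimental results show that the colorization quality of the proposed method is clearly better than that of the other methods compared.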
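
The multi-scale idea behind ASPP can be illustrated with a minimal, dependency-free sketch. It is not the authors' Res-ASPP-Unet: it works on a 1-D signal instead of 2-D feature maps, uses a fixed all-ones kernel instead of learned weights, and the function names `atrous_conv1d` and `aspp1d` as well as the dilation rates (1, 2, 4) are assumptions chosen purely for illustration. It only shows how parallel branches with different dilation rates see different context widths with the same number of weights.

```python
from typing import List, Sequence


def atrous_conv1d(signal: Sequence[float], kernel: Sequence[float], rate: int) -> List[float]:
    """Dilated ("atrous") 1-D convolution with zero padding; output length equals input length.

    Kernel taps are spaced `rate` samples apart, so a 3-tap kernel with
    rate r covers a window of 2*r + 1 samples without extra weights.
    As in deep-learning libraries, the kernel is not flipped.
    """
    if rate < 1:
        raise ValueError("rate must be >= 1")
    if len(kernel) % 2 == 0:
        raise ValueError("kernel length must be odd")
    half = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = i + (k - half) * rate  # input position read by this tap
            if 0 <= j < n:             # positions outside the signal count as zero
                acc += w * signal[j]
        out.append(acc)
    return out


def aspp1d(signal: Sequence[float], kernel: Sequence[float],
           rates: Sequence[int] = (1, 2, 4)) -> List[List[float]]:
    """Apply the same kernel at several dilation rates (hypothetical rates).

    Returns one output row per rate, a stand-in for channel concatenation.
    """
    return [atrous_conv1d(signal, kernel, r) for r in rates]


# An impulse input makes the footprint of each branch visible.
impulse = [0.0] * 4 + [1.0] + [0.0] * 4
branches = aspp1d(impulse, kernel=[1.0, 1.0, 1.0])
for rate, row in zip((1, 2, 4), branches):
    print(rate, row)
```

With the impulse input, the rate-1 branch responds only at the three positions around the centre, while the rate-4 branch responds at positions four samples apart. In a 2-D network the stacked rows would correspond to feature maps concatenated along the channel axis before being merged with the decoder features.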

CLC number:

- TP391.41
