Journal of Jilin University (Engineering and Technology Edition), 2025, Vol. 55, Issue (12): 3840-3851. doi: 10.13229/j.cnki.jdxbgxb.20240397

• Transportation Engineering · Civil Engineering •

Method of lane detection based on adaptive fusion of double branch features

Tian-min DENG, Peng-fei XIE, Yang YU, Yue-tian CHEN

- School of Traffic and Transportation, Chongqing Jiaotong University, Chongqing 400074, China

Abstract:

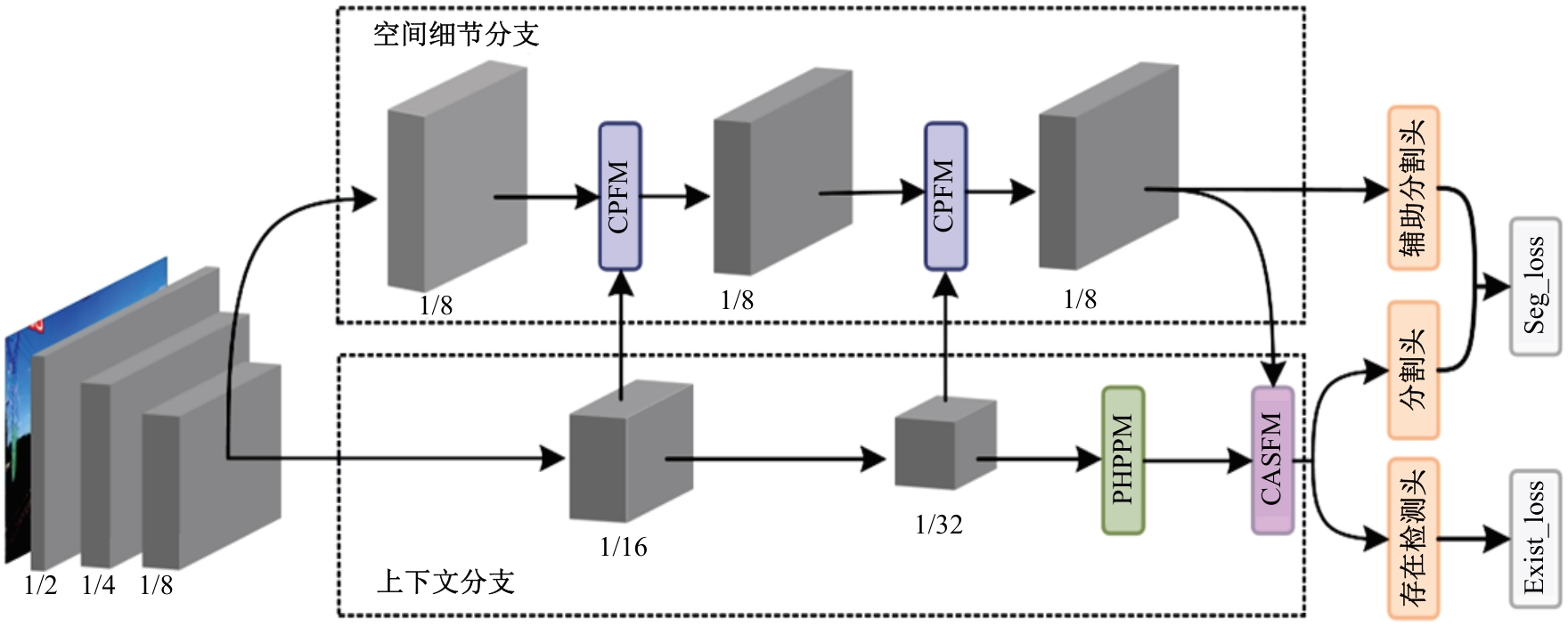

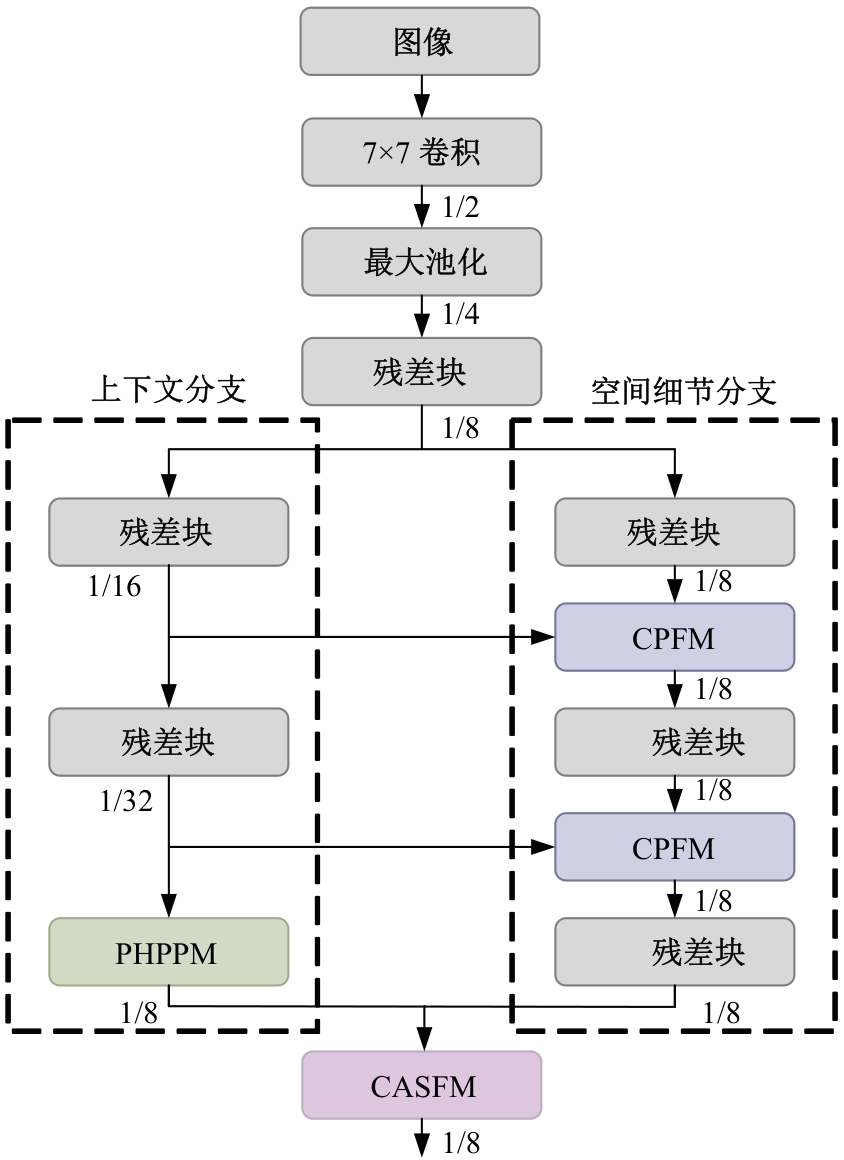

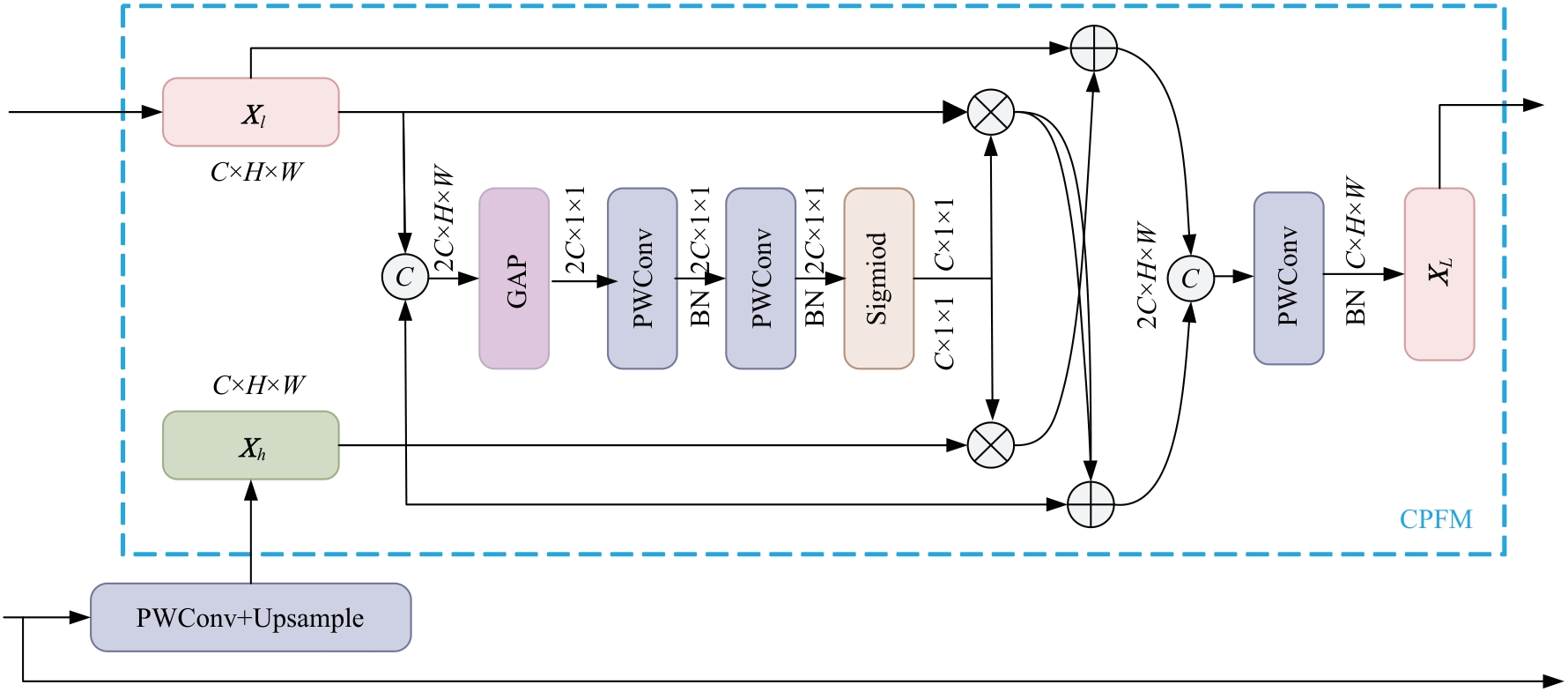

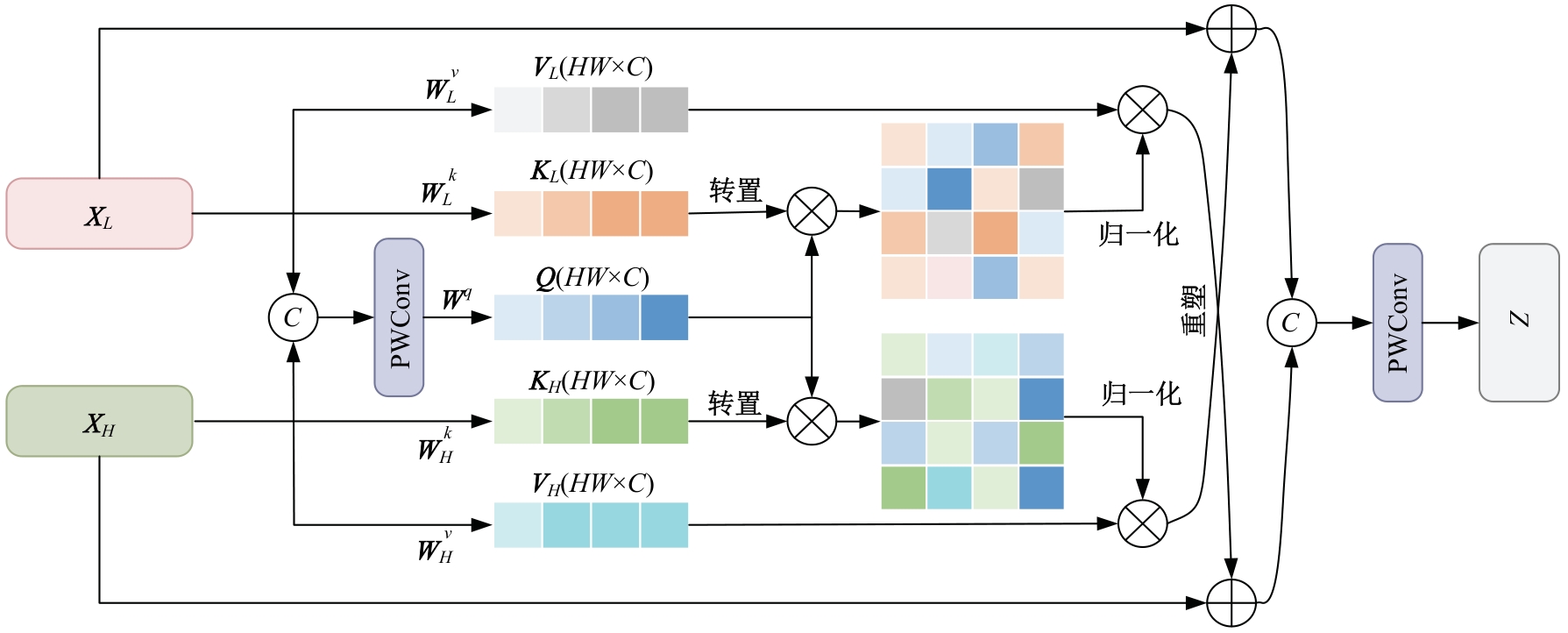

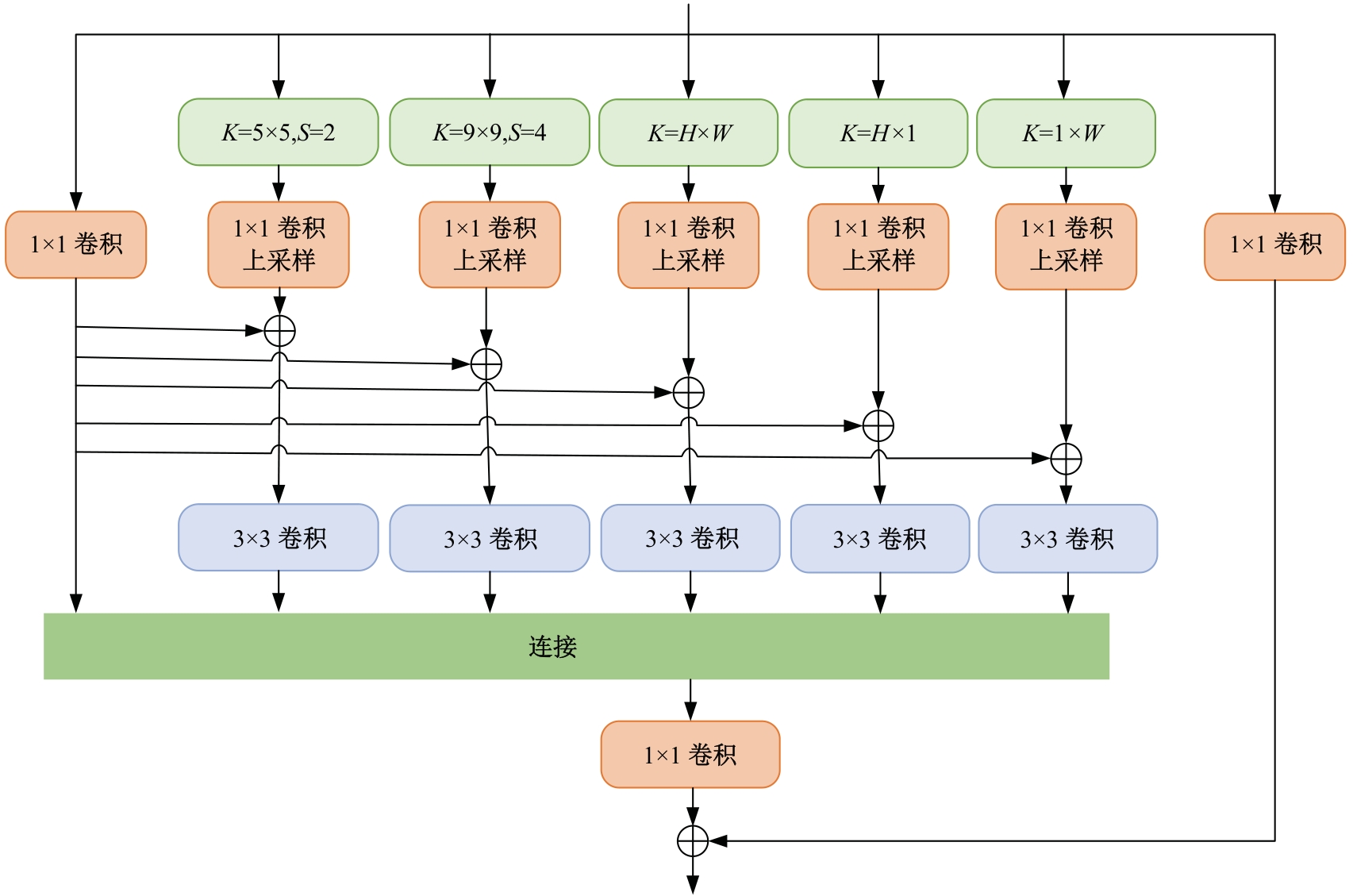

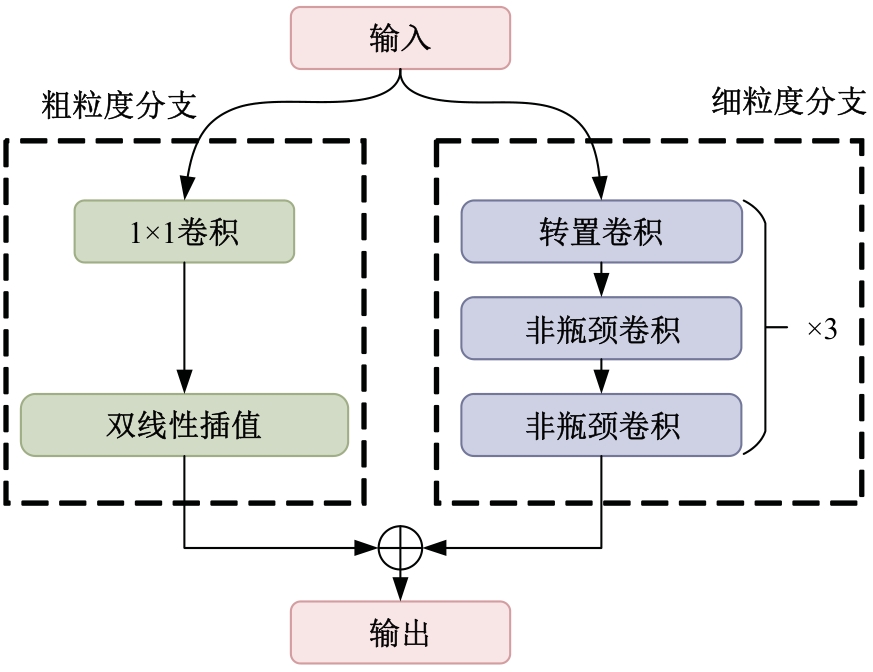

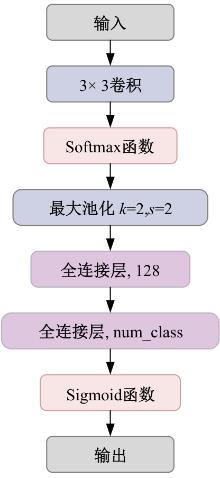

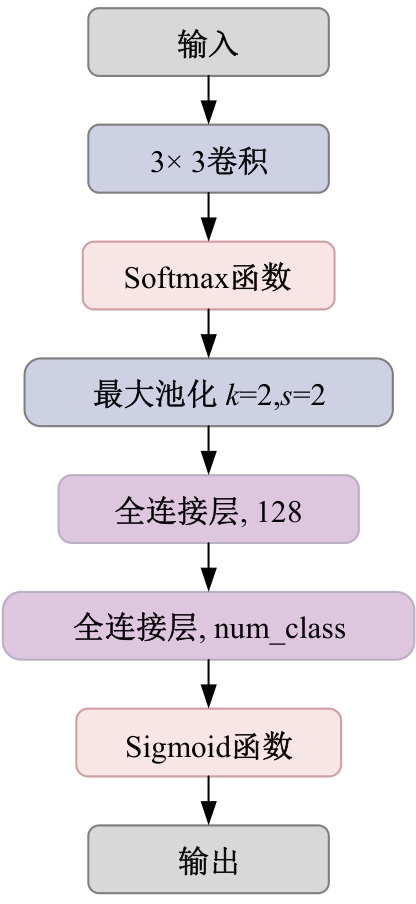

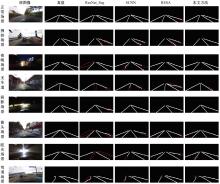

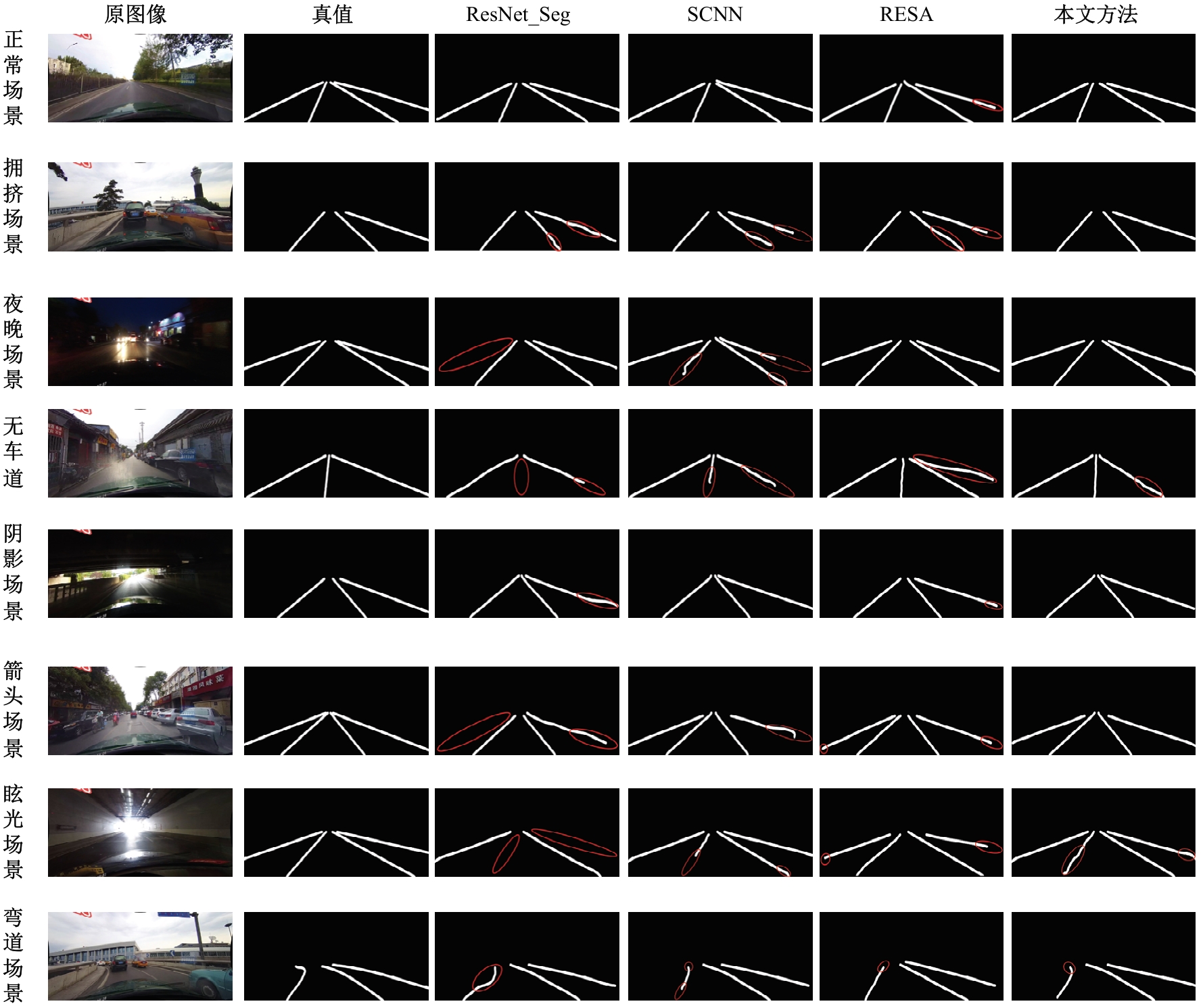

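To address the feature corrosion and submersion that readily arise when deep and shallow features are fused directly, and to achieve accurate lane detection in complex environments, a lane detection method based on adaptive fusion of dual-branch features is proposed. First, a dual-branch feature extraction network is designed to strengthen lane feature extraction in complex environments and reduce the loss of spatial detail. Second, a feature adaptive fusion module is constructed; it uses channel attention and self-attention to guide feature selection and fusion, adaptively adjusts the fusion process, and optimizes the channel and spatial semantic information of the feature maps. In addition, an improved parallel hybrid pyramid pooling module better matches the slender, long-span characteristics of roads and captures long-range contextual relationships in multiple directions. Finally, the method was tested on the TuSimple, CULane, and Curvelanes datasets, where it reached F1 scores of 96.93%, 76.48%, and 83.21%, respectively. The experimental results show that the method handles lane detection effectively in complex scenes such as occlusion and shadow, and that its performance improves significantly on mainstream segmentation-based lane detection methods.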
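The channel-attention part of the fusion idea described in the abstract can be sketched as follows. This is a minimal illustration, not the authors' module: the squeeze-and-excitation style gate, the function names (`channel_gate`, `adaptive_fuse`), the tensor sizes, and the random untrained weights are all assumptions, and the self-attention guidance and the pyramid pooling module are left out.

```python
import numpy as np


def sigmoid(x):
    """Elementwise logistic function."""
    return 1.0 / (1.0 + np.exp(-x))


def channel_gate(feat, w1, w2):
    """SE-style channel attention: one weight in [0, 1] per channel.

    feat: (C, H, W) feature map; w1: (C_r, C); w2: (C, C_r).
    """
    pooled = feat.mean(axis=(1, 2))        # global average pool -> (C,)
    hidden = np.maximum(w1 @ pooled, 0.0)  # ReLU bottleneck -> (C_r,)
    return sigmoid(w2 @ hidden)            # per-channel gate -> (C,)


def adaptive_fuse(shallow, deep, w1, w2):
    """Blend two same-shaped feature maps with a per-channel gate.

    The gate is computed from the sum of both branches and used as a
    convex weight: gate * shallow + (1 - gate) * deep.
    """
    gate = channel_gate(shallow + deep, w1, w2)[:, None, None]  # (C, 1, 1)
    return gate * shallow + (1.0 - gate) * deep


rng = np.random.default_rng(0)
C, H, W, C_r = 8, 4, 16, 2                # hypothetical sizes
shallow = rng.standard_normal((C, H, W))  # stand-in for the detail branch
deep = rng.standard_normal((C, H, W))     # stand-in for the semantic branch
w1 = rng.standard_normal((C_r, C))        # random, untrained weights (assumption)
w2 = rng.standard_normal((C, C_r))
fused = adaptive_fuse(shallow, deep, w1, w2)
print(fused.shape)
```

Because the gate acts as a convex weight, each fused value lies between the corresponding shallow and deep values, so neither branch can be entirely overwritten by simple addition. In a trained network the weights `w1` and `w2` would be learned rather than sampled.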

CLC Number:

- TP391.4
