Journal of Jilin University (Engineering and Technology Edition), 2025, Vol. 55, Issue (9): 3032-3041. doi: 10.13229/j.cnki.jdxbgxb.20250454

• Computer Science and Technology •

Edge feature-guided semantic segmentation method for intelligent vehicle

Zhen HUO1, Li-sheng JIN1,2, Qiang HUA3, HE Yang1

- 1. School of Vehicle and Energy, Yanshan University, Qinhuangdao 066004, Hebei, China
- 2. Hebei Key Laboratory of Special Carrier Equipment, Yanshan University, Qinhuangdao 066004, Hebei, China
- 3. BYD Auto Industry Company Limited, Shenzhen 518118, Guangdong, China

Abstract:

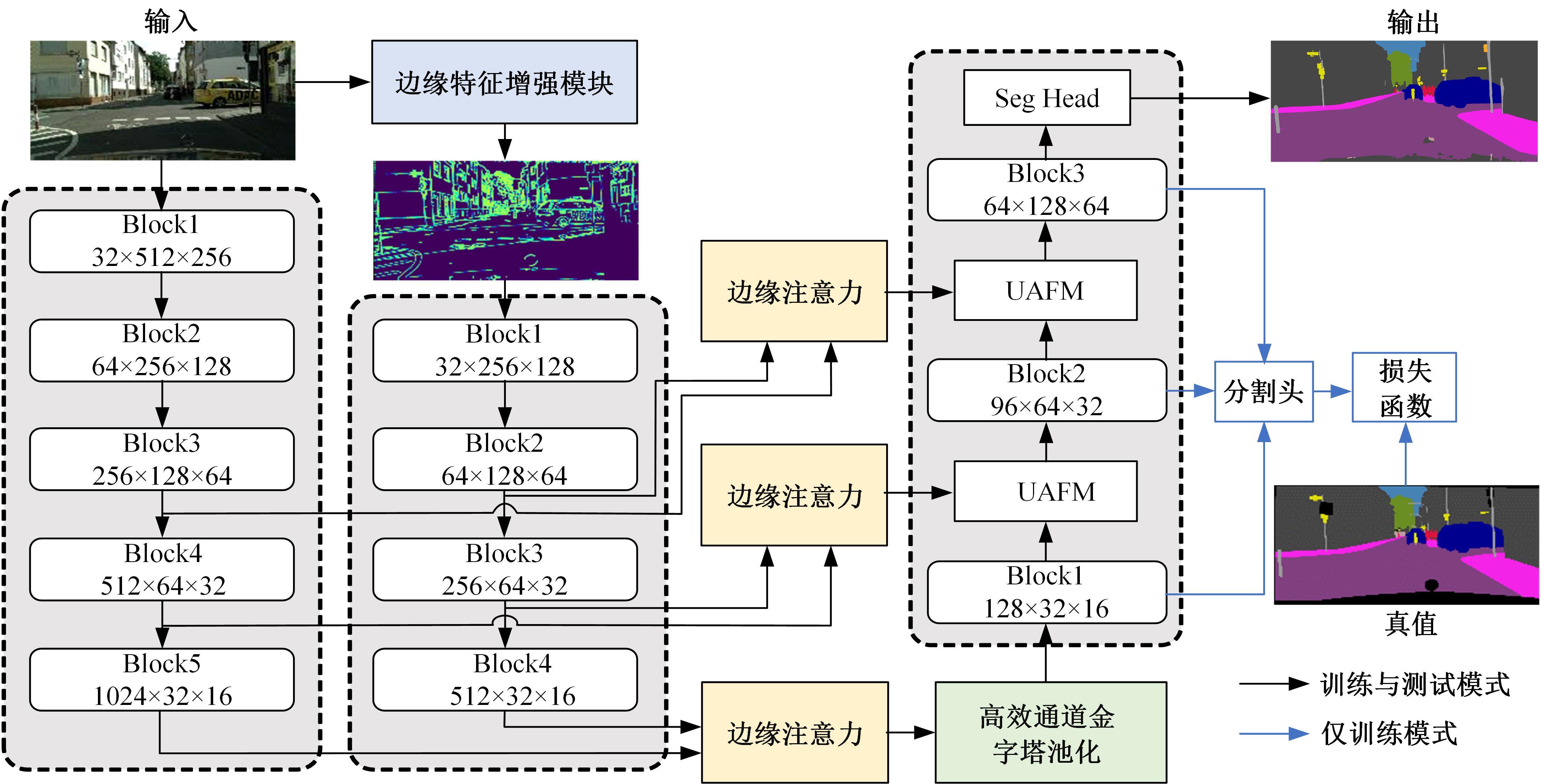

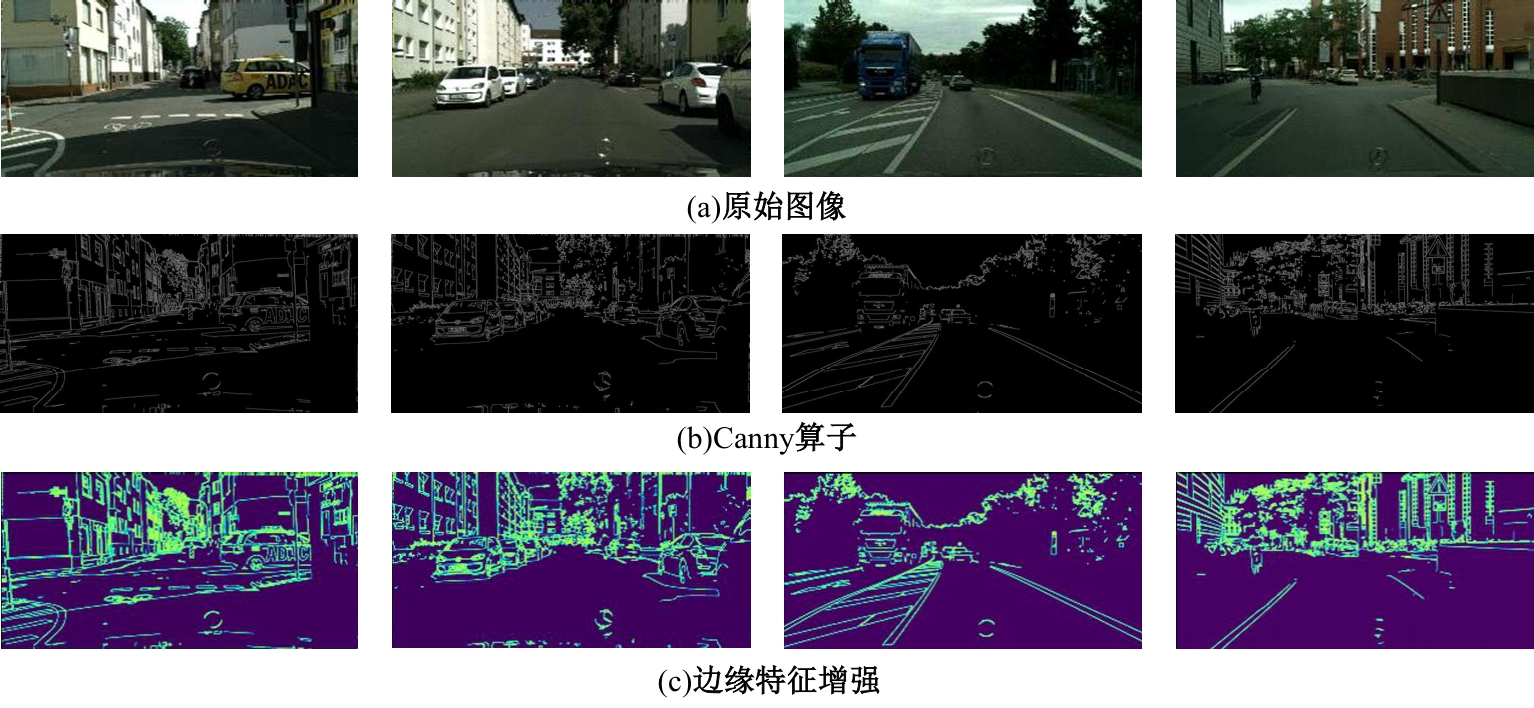

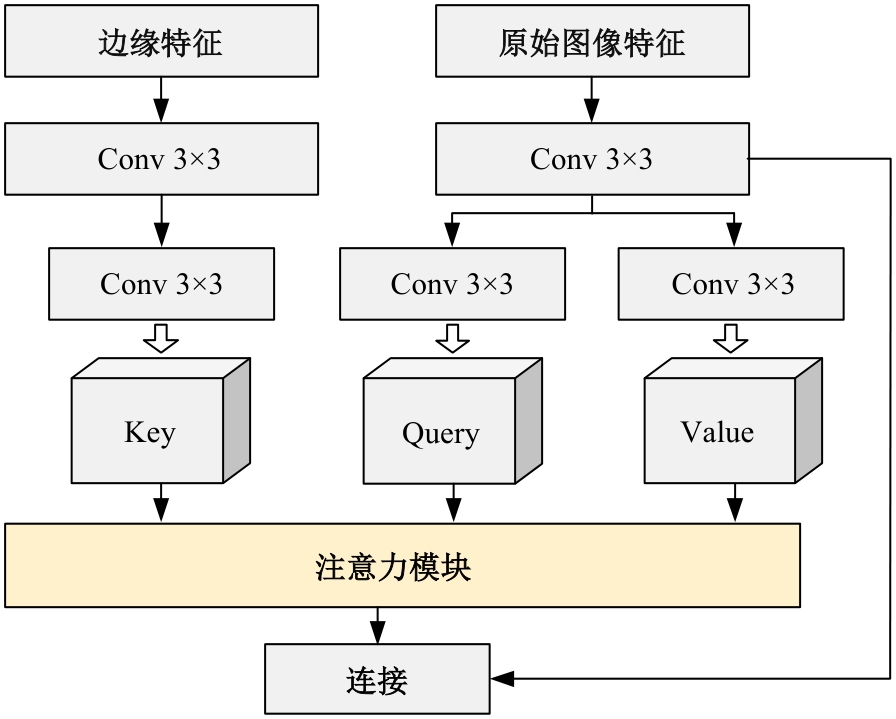

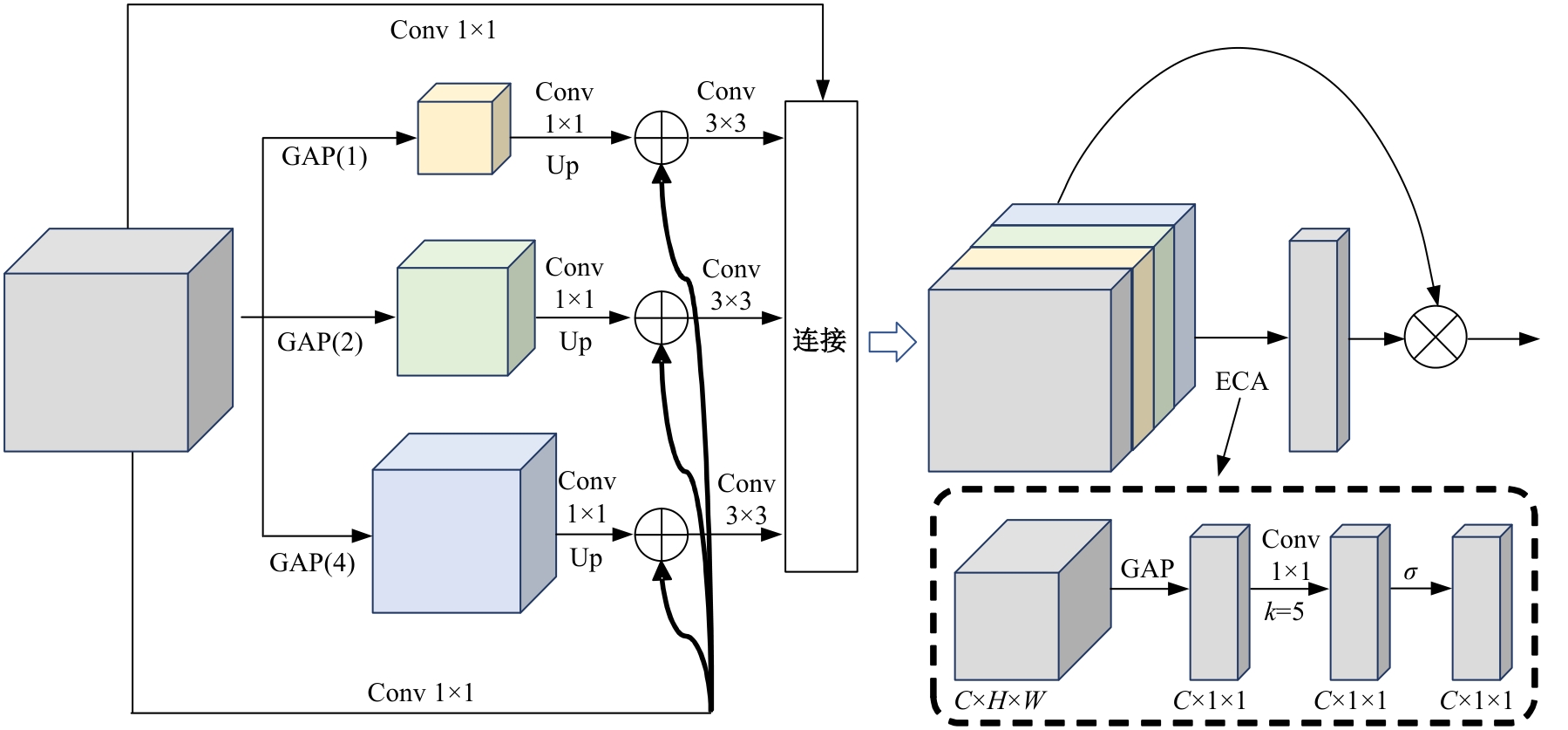

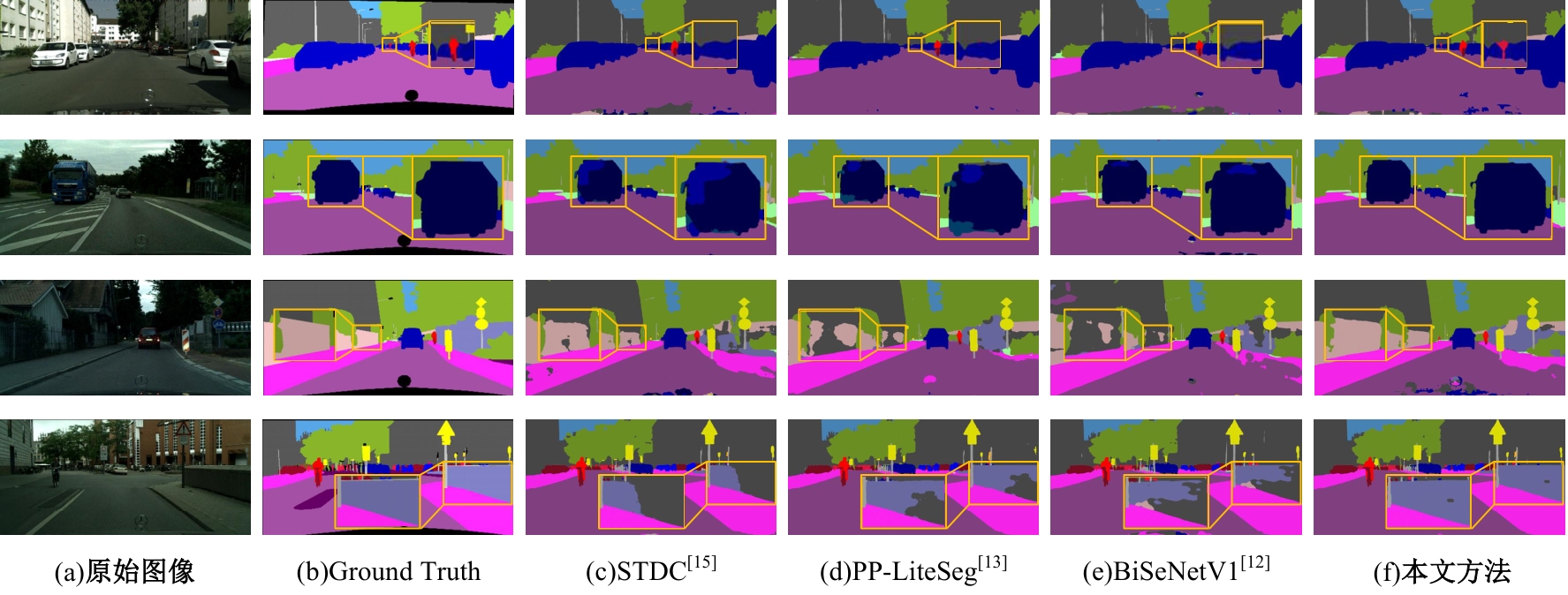

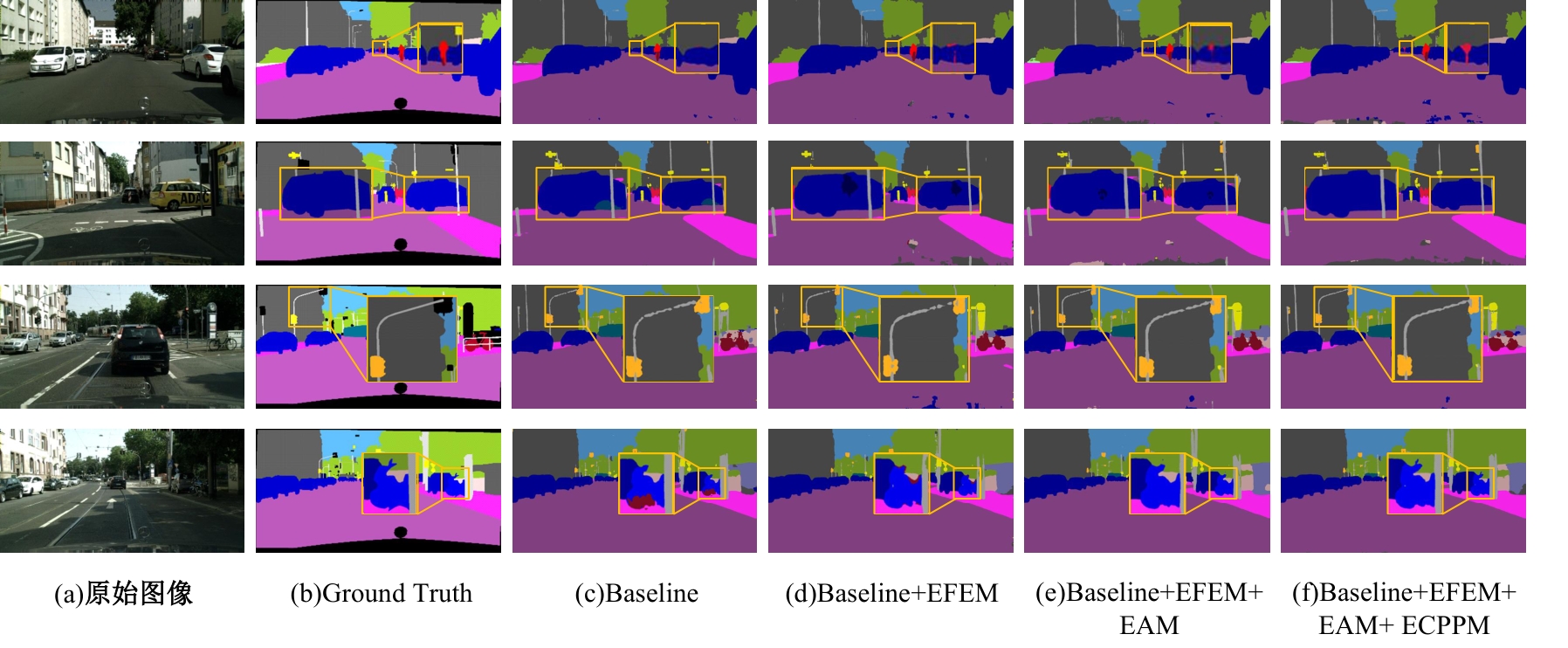

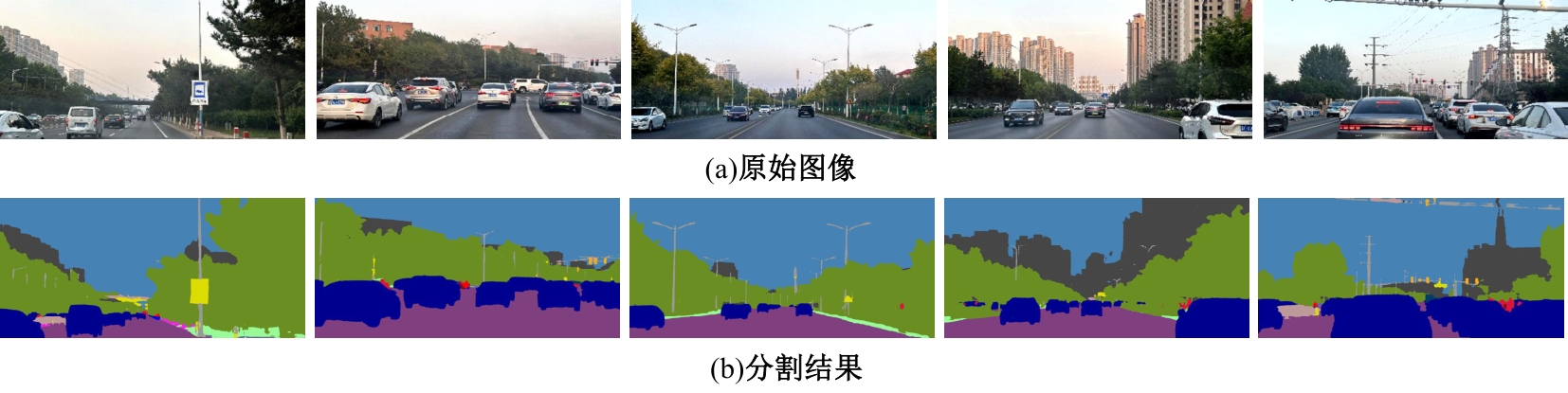

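To address the problem that existing semantic segmentation algorithms do not fully exploit object edge features in autonomous driving scenes, an edge feature-guided semantic segmentation method for intelligent vehicles is proposed. First, an edge feature enhancement module extracts edge information from the original image and feeds it to the encoder as a parallel input, strengthening the network's representation of edge features. Second, an edge attention module is proposed to fuse the original image features with the edge features, balancing how much attention the segmentation task pays to original features and to edge features in different image regions. Finally, an efficient channel pyramid pooling module is designed in the decoder to make better use of the contextual semantic features obtained after pooling at different scales. Experimental results on the Cityscapes dataset show that the proposed method reaches an MIoU of 76.1% at a running speed of 132.6 f/s. Compared with the baseline algorithm PP-LiteSeg, MIoU is improved by 2.2%, achieving a balance between segmentation accuracy and real-time performance.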
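For readers unfamiliar with the accuracy metric quoted above, the following is a minimal sketch of how mean intersection over union (MIoU) is commonly computed from predicted and ground-truth labels. It is not taken from the paper: the function name `mean_iou`, the flattened-list inputs, and the toy labels are illustrative assumptions, and the ignore label 255 follows the usual Cityscapes training-label convention. Benchmark scores are normally accumulated over the whole validation set rather than per image.

```python
def mean_iou(pred, target, num_classes, ignore_index=255):
    """Mean IoU over the classes that occur in pred or target.

    pred and target are flat sequences of integer class labels
    (hypothetical input format chosen for this sketch).
    """
    inter = [0] * num_classes  # true positives per class
    union = [0] * num_classes  # TP + FP + FN per class
    for p, t in zip(pred, target):
        if t == ignore_index:
            continue  # unlabeled pixels do not count
        if p == t:
            inter[t] += 1
            union[t] += 1
        else:
            union[p] += 1  # false positive for the predicted class
            union[t] += 1  # false negative for the true class
    ious = [i / u for i, u in zip(inter, union) if u > 0]
    return sum(ious) / len(ious) if ious else 0.0


# Toy example with three classes; the last pixel is ignored.
pred = [0, 0, 1, 1, 2, 2]
target = [0, 1, 1, 1, 2, 255]
score = mean_iou(pred, target, num_classes=3)
print(f"{score:.4f}")  # 0.7222
```

In the toy example the per-class IoU values are 1/2, 2/3, and 1, so their mean is 13/18.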

CLC Number:

- U491.2
