Journal of Jilin University (Engineering and Technology Edition), 2025, Vol. 55, Issue (10): 3372-3383. doi: 10.13229/j.cnki.jdxbgxb.20231460

• Computer Science and Technology •

Future instance segmentation prediction based on bird’s eye view of multi-source spatiotemporal information fusion

Xia FENG (1, 2, 3), Shuang CHEN (2, 3), Min LU (2, 3), Hai-chao ZUO (2, 3)

1. Institute of Science and Technology Innovation, Civil Aviation University of China, Tianjin 300300, China
2. College of Computer Science and Technology, Civil Aviation University of China, Tianjin 300300, China
3. Key Laboratory of Civil Aviation Smart Airport Theory and System, Civil Aviation University of China, Tianjin 300300, China

Abstract:

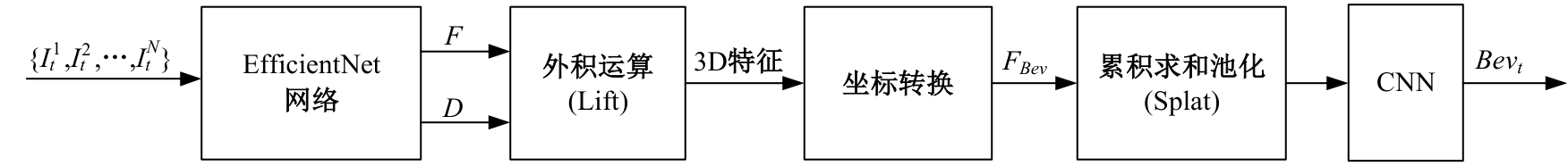

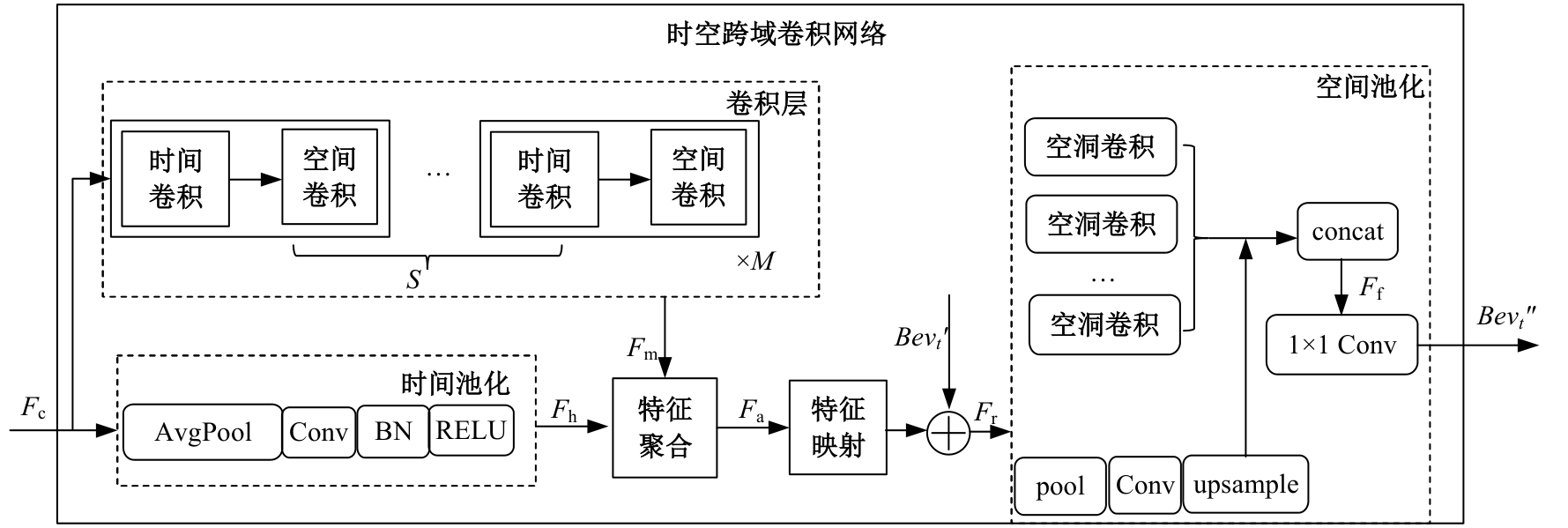

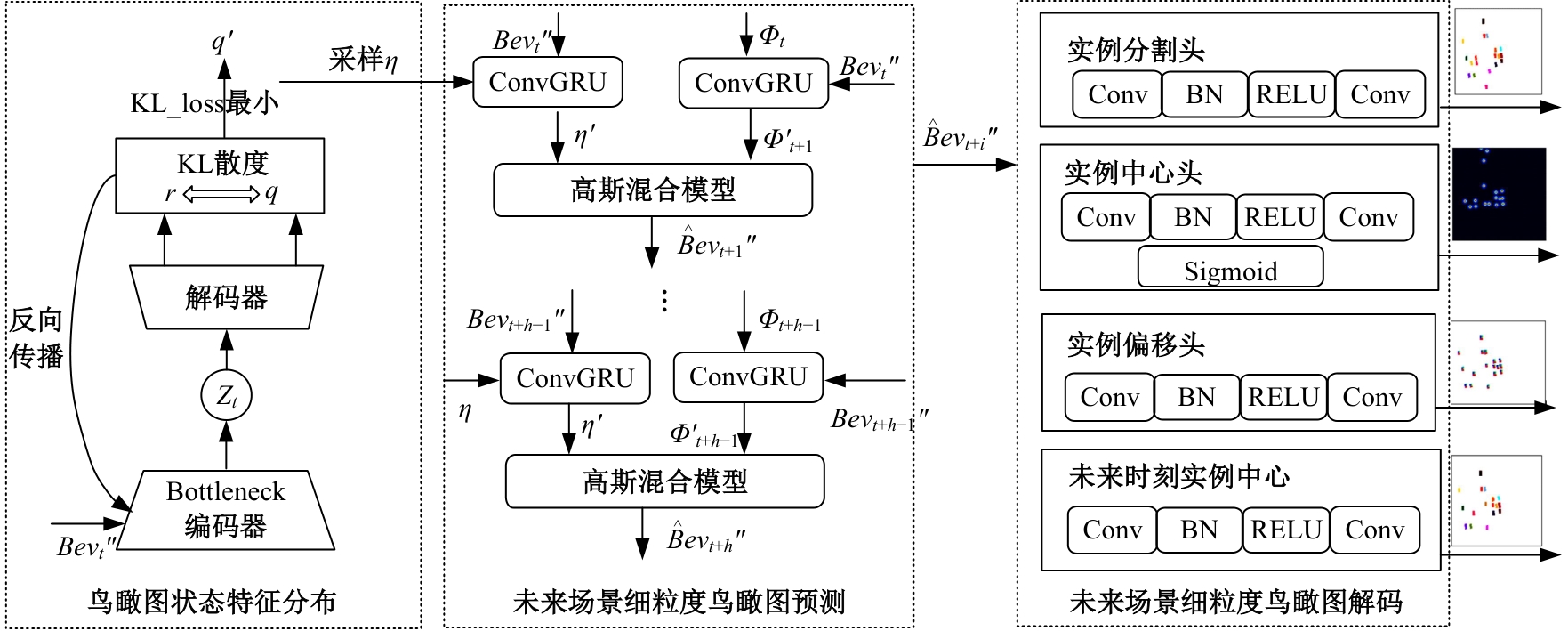

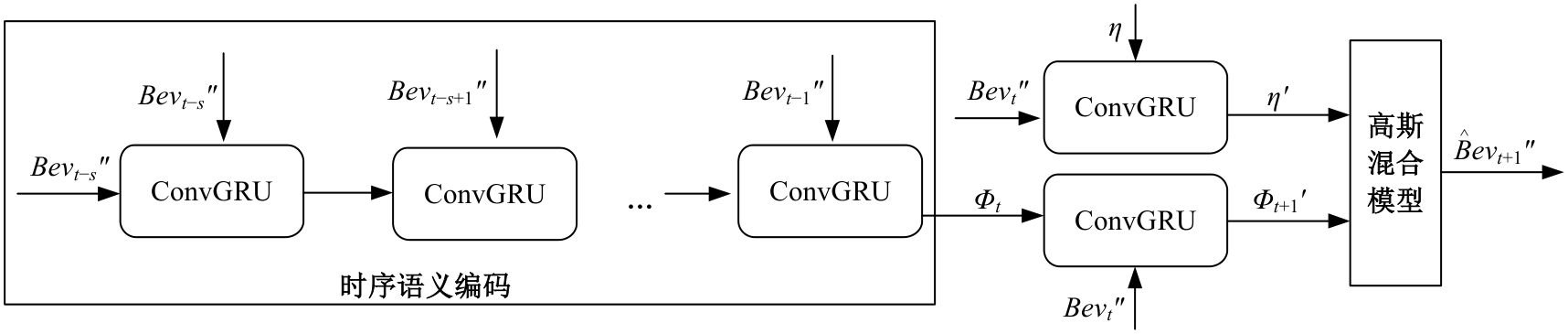

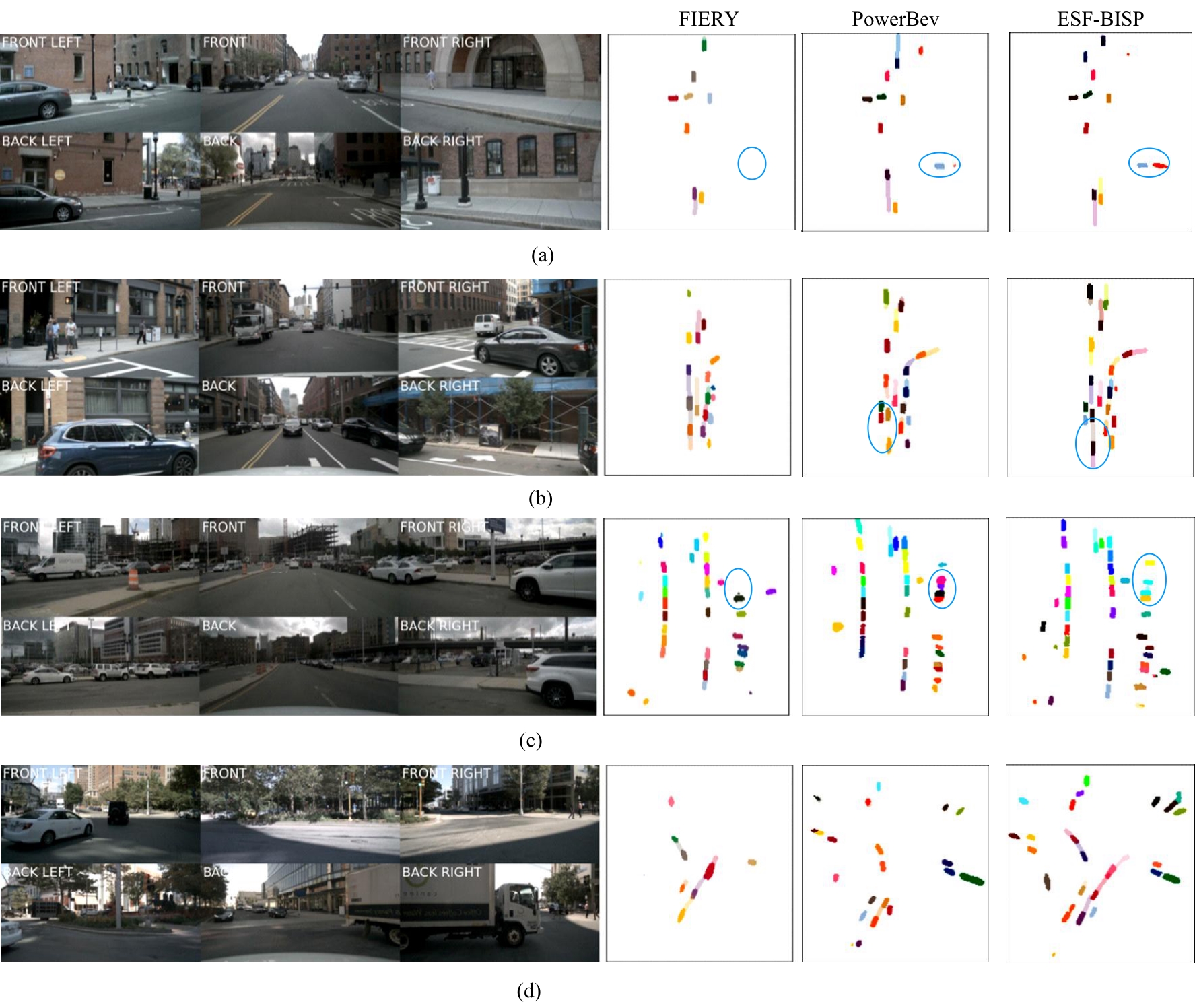

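To address the difficulty existing instance segmentation methods have in recognizing occluded objects, and their insufficient robustness to noise and viewpoint changes, a method for generating a fine-grained scene bird's-eye view (BEV) by fusing multi-source spatiotemporal information (MSTFB) is proposed. Starting from a rasterized scene BEV, the method first fuses temporal BEV features with a self-attention mechanism, then uses a spatiotemporal cross-domain convolutional network to capture the relative positions between instances and aggregate multi-scale features, yielding a fine-grained scene BEV. On this basis, a BEV instance segmentation prediction method that fuses temporal encoding and sample features (ESF-BISP) is further proposed. It applies a ConvGRU to historical frames to obtain temporal semantic features, uses a conditional variational autoencoder to generate the state-feature distribution of the current frame's fine-grained BEV and to sample BEV features from it, fuses the temporal and sampled BEV features with a Gaussian mixture model, and decodes the result into the fine-grained scene BEV of future frames. Experiments on the public nuScenes dataset show that, compared with the baseline LSS, MSTFB improves vehicle-segmentation IoU by 7.09% and effectively segments distant and occluded vehicles; ESF-BISP better captures the changes of dynamic instances in the scene and significantly outperforms the baseline methods in both instance segmentation and future instance segmentation prediction.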
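The abstract says MSTFB fuses temporal BEV features with self-attention but does not give the layout. The numpy sketch below assumes one common choice: at every BEV grid cell, the feature vectors of the T input frames form a length-T token sequence, and scaled dot-product self-attention mixes them. The function name `temporal_self_attention` and the projection matrices `wq`, `wk`, `wv` are hypothetical and randomly initialised here; the paper's actual attention design may differ.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the max for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def temporal_self_attention(bev_seq, wq, wk, wv):
    """Per-cell temporal self-attention (hypothetical layout, not the paper's code).

    bev_seq: (T, C, H, W) BEV feature maps of T consecutive frames.
    wq, wk, wv: (C, D) query/key/value projections.
    Returns fused features (T, D, H, W) and attention weights (H, W, T, T).
    """
    T, C, H, W = bev_seq.shape
    # One length-T token sequence per BEV cell: (H*W, T, C).
    tokens = bev_seq.transpose(2, 3, 0, 1).reshape(H * W, T, C)
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv            # each (H*W, T, D)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(q.shape[-1])    # (H*W, T, T)
    attn = softmax(scores, axis=-1)                             # rows sum to 1
    out = attn @ v                                              # (H*W, T, D)
    D = out.shape[-1]
    fused = out.reshape(H, W, T, D).transpose(2, 3, 0, 1)       # (T, D, H, W)
    return fused, attn.reshape(H, W, T, T)


rng = np.random.default_rng(0)
T, C, D, H, W = 3, 8, 4, 5, 5       # 3 frames on a toy 5x5 BEV grid
bev_seq = rng.standard_normal((T, C, H, W))
wq, wk, wv = (rng.standard_normal((C, D)) * 0.1 for _ in range(3))
fused, attn = temporal_self_attention(bev_seq, wq, wk, wv)
print(fused.shape, attn.shape)      # (3, 4, 5, 5) (5, 5, 3, 3)
```

Taking `fused[-1]` would give the latest frame's features enriched with history; how MSTFB then passes them to its spatiotemporal cross-domain convolutional network is not described in the abstract.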
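Per the abstract, ESF-BISP encodes historical frames with a ConvGRU and samples BEV features from a conditional VAE's distribution. The numpy sketch below illustrates only those two mechanics under stated assumptions: a standard ConvGRU cell with zero-padded 3×3 convolutions, and a diagonal-Gaussian latent head conditioned on the ConvGRU hidden state, sampled with the reparameterization trick. The names `ConvGRUCell`, `encode_history`, `sample_latent`, `w_mu`, and `w_logvar` are hypothetical, all weights are random, and the Gaussian-mixture fusion and decoder are not reproduced.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def conv2d_same(x, w, b):
    """Zero-padded 'same' 2D cross-correlation with an odd kernel size k.
    x: (C_in, H, W), w: (C_out, C_in, k, k), b: (C_out,) -> (C_out, H, W)."""
    c_out, _, k, _ = w.shape
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))
    H, W = x.shape[1:]
    out = np.empty((c_out, H, W))
    for i in range(H):
        for j in range(W):
            patch = xp[:, i:i + k, j:j + k]                 # (C_in, k, k)
            out[:, i, j] = np.tensordot(w, patch, axes=3) + b
    return out


class ConvGRUCell:
    """Standard ConvGRU cell (update gate z, reset gate r); weights are random here."""

    def __init__(self, c_in, c_hid, k=3, seed=0):
        rng = np.random.default_rng(seed)
        shape = (c_hid, c_in + c_hid, k, k)
        self.wz, self.wr, self.wh = (rng.normal(0.0, 0.1, shape) for _ in range(3))
        self.bz, self.br, self.bh = (np.zeros(c_hid) for _ in range(3))
        self.c_hid = c_hid

    def step(self, x, h):
        xh = np.concatenate([x, h], axis=0)
        z = sigmoid(conv2d_same(xh, self.wz, self.bz))       # update gate
        r = sigmoid(conv2d_same(xh, self.wr, self.br))       # reset gate
        cand = np.tanh(conv2d_same(np.concatenate([x, r * h], axis=0), self.wh, self.bh))
        return (1.0 - z) * h + z * cand                      # gated blend of old and new


def encode_history(frames, cell):
    """Run the cell over past BEV frames (T, C, H, W); return the last hidden state."""
    h = np.zeros((cell.c_hid,) + frames.shape[2:])
    for x in frames:
        h = cell.step(x, h)
    return h


def sample_latent(h, w_mu, w_logvar, rng):
    """Diagonal-Gaussian head plus reparameterization: s = mu + sigma * eps."""
    mu = conv2d_same(h, w_mu, np.zeros(w_mu.shape[0]))
    logvar = conv2d_same(h, w_logvar, np.zeros(w_logvar.shape[0]))
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * logvar) * eps, mu, logvar


rng = np.random.default_rng(0)
frames = rng.standard_normal((3, 4, 8, 8))       # 3 past frames, 4 channels, 8x8 grid
cell = ConvGRUCell(c_in=4, c_hid=6)
h = encode_history(frames, cell)                 # temporal feature (6, 8, 8)
w_mu, w_logvar = (rng.normal(0.0, 0.1, (2, 6, 1, 1)) for _ in range(2))
sample, mu, logvar = sample_latent(h, w_mu, w_logvar, rng)
print(h.shape, sample.shape)                     # (6, 8, 8) (2, 8, 8)
```

Sampling through `mu + sigma * eps` keeps the draw differentiable with respect to `mu` and `logvar`, which is why VAEs use it during training.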
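The reported 7.09% gain is in vehicle-segmentation IoU. A minimal sketch of the metric on binary BEV masks follows. It assumes a common convention in BEV segmentation evaluation: intersection and union are accumulated over the whole evaluation set before dividing once. The abstract states neither the exact protocol nor whether 7.09% is absolute points or a relative gain; the function names and toy masks are hypothetical.

```python
import numpy as np


def iou_counts(pred, target):
    """Intersection and union cell counts of two boolean BEV masks of equal shape."""
    p = np.asarray(pred, dtype=bool)
    t = np.asarray(target, dtype=bool)
    return int(np.logical_and(p, t).sum()), int(np.logical_or(p, t).sum())


def dataset_iou(pairs):
    """Accumulate counts over all (pred, target) pairs, then divide once."""
    inter = union = 0
    for pred, target in pairs:
        i, u = iou_counts(pred, target)
        inter += i
        union += u
    return inter / union if union else float("nan")


pred1 = np.array([[1, 1, 0], [0, 1, 0]])
gt1 = np.array([[1, 0, 0], [0, 1, 1]])
pred2 = np.zeros((2, 3), dtype=int)
gt2 = np.array([[0, 0, 1], [0, 0, 0]])

print(iou_counts(pred1, gt1))                      # (2, 4): per-sample IoU 0.5
print(iou_counts(pred2, gt2))                      # (0, 1): per-sample IoU 0.0
print(dataset_iou([(pred1, gt1), (pred2, gt2)]))   # 0.4
```

Averaging the two per-sample scores would give 0.25 instead of 0.4, so the aggregation rule matters when comparing IoU figures across papers.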

CLC number: TP391.41

