Journal of Jilin University (Engineering and Technology Edition), 2025, Vol. 55, Issue 3: 1037-1049. doi: 10.13229/j.cnki.jdxbgxb20230544


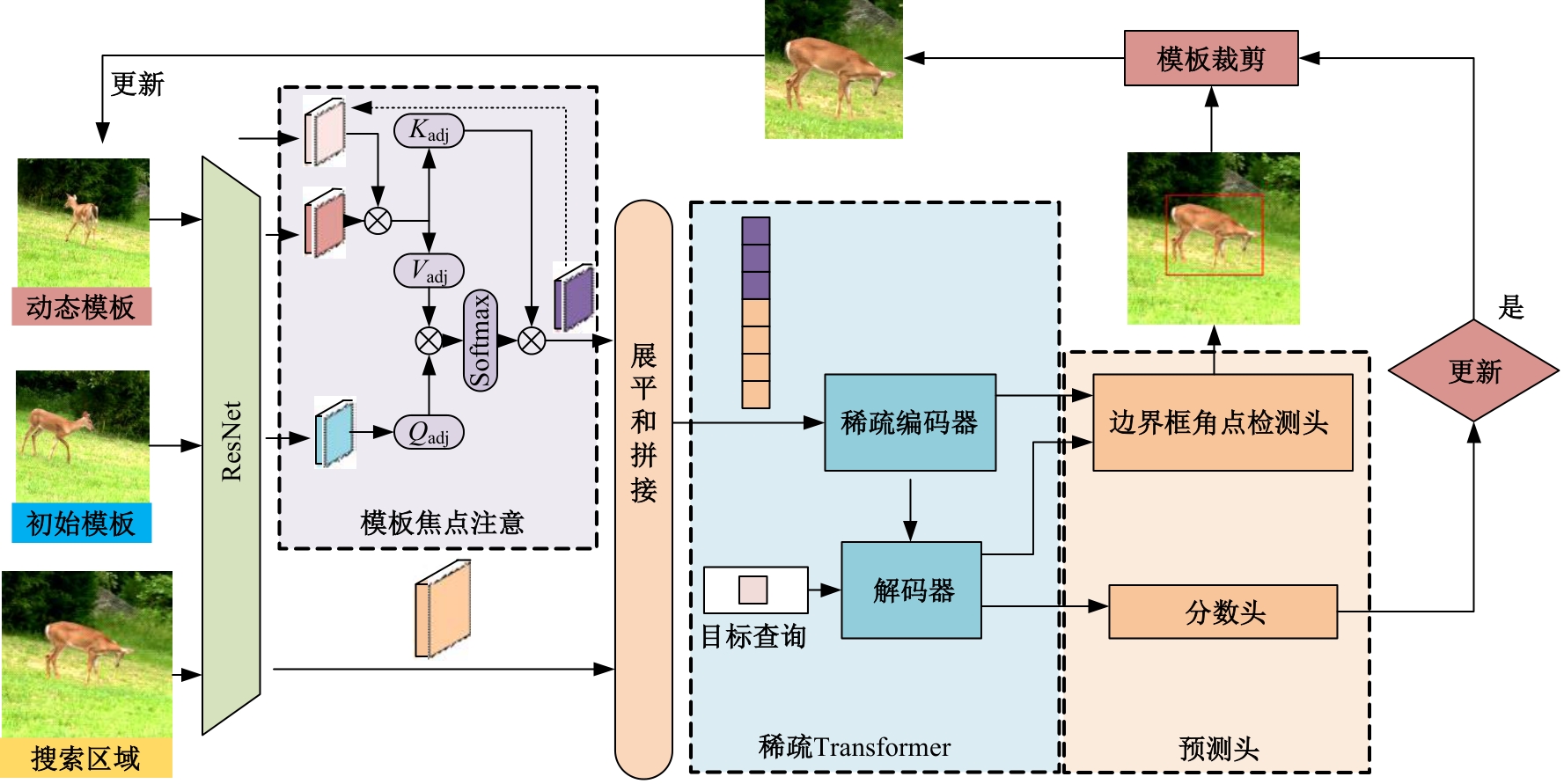

Spatiotemporal Transformer with template attention for target tracking

Guang-wen LIU1, Xin-yue XIE1, Qiang FU2, Hua CAI1, Wei-gang WANG3, Zhi-yong MA3

- 1. School of Electronic Information Engineering, Changchun University of Science and Technology, Changchun 130022, China
- 2. School of Opto-Electronic Engineering, Changchun University of Science and Technology, Changchun 130022, China
- 3. No. 2 Department of Urology, the First Hospital of Jilin University, Changchun 130061, China

CLC Number: TP391


