Journal of Jilin University (Engineering and Technology Edition), 2025, Vol. 55, Issue (11): 3697-3704. doi: 10.13229/j.cnki.jdxbgxb.20240223

• Computer Science and Technology •

Vision object detection model with deep active learning

Yu-dong CAO, Xin-lin LIAO, Xin CHEN, Xu JIA

- School of Electronics and Information Engineering, Liaoning University of Technology, Jinzhou 121001, China

Abstract:

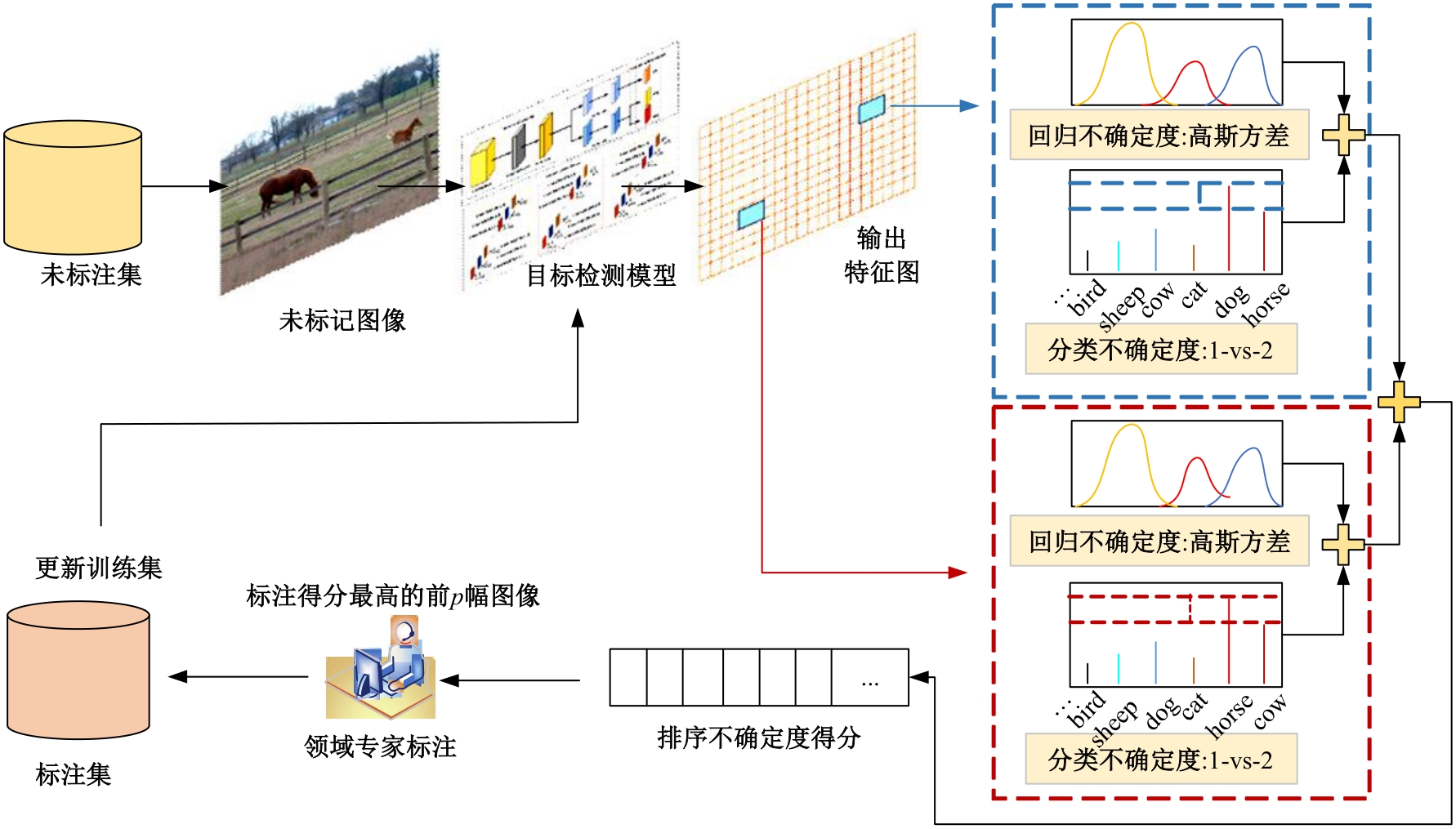

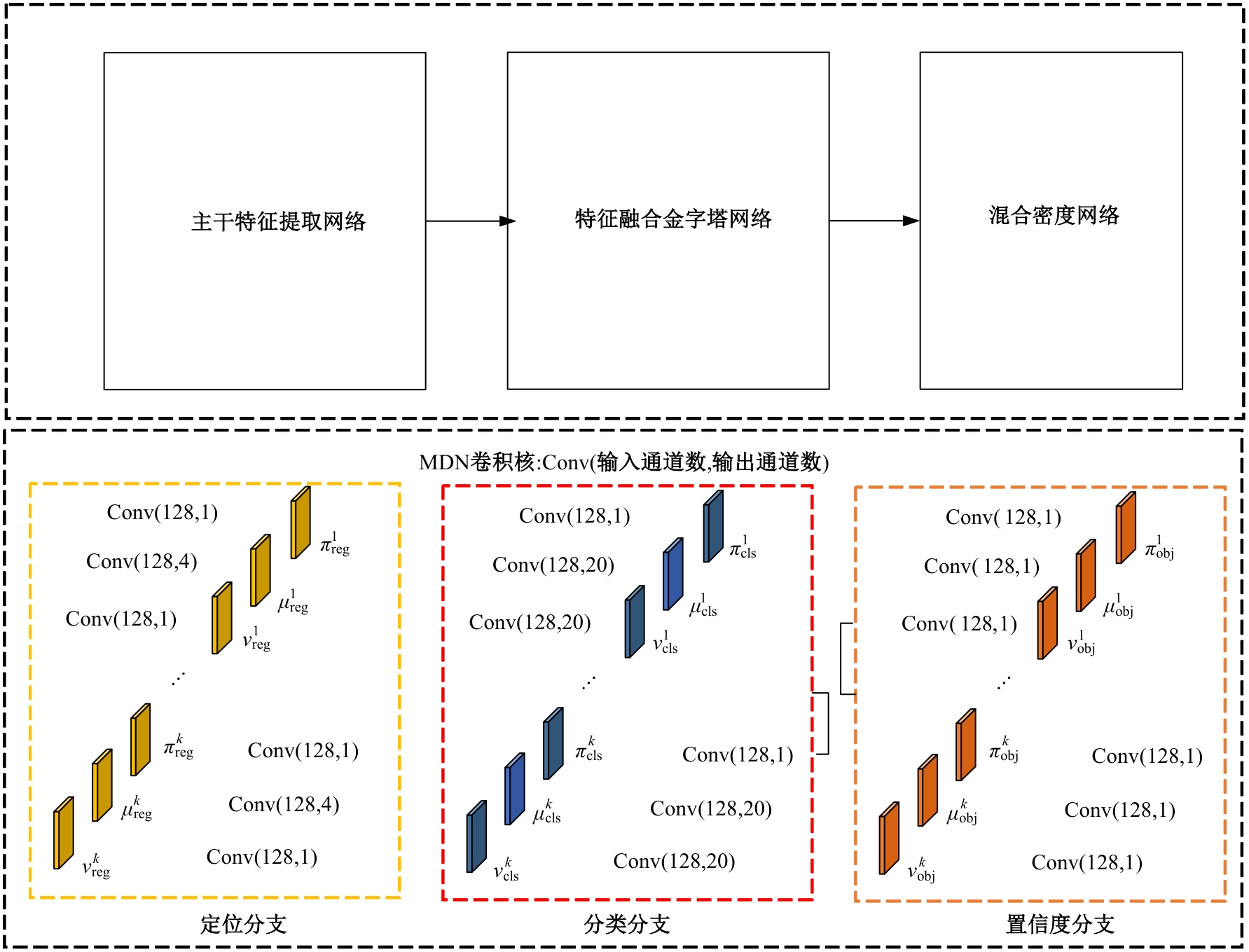

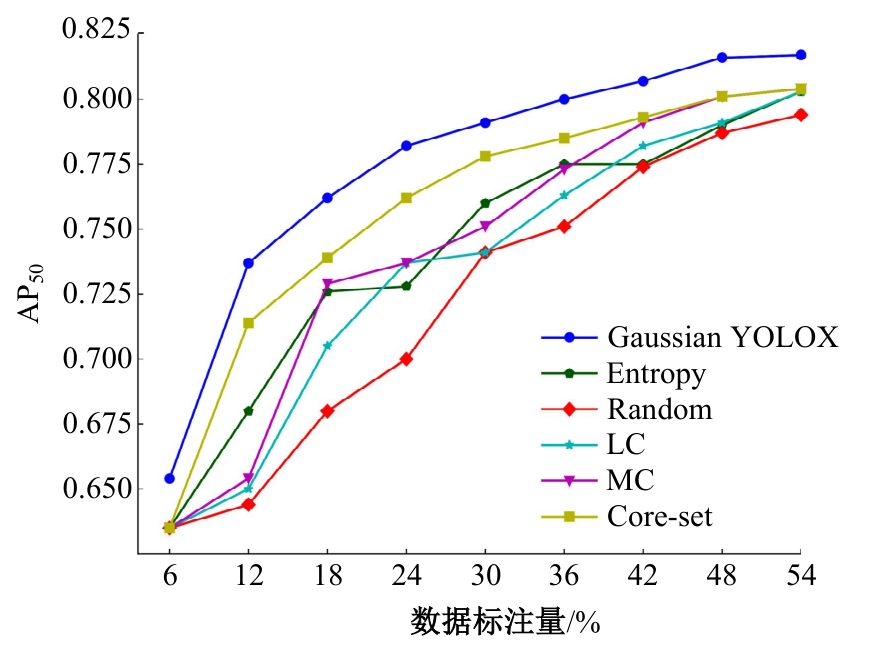

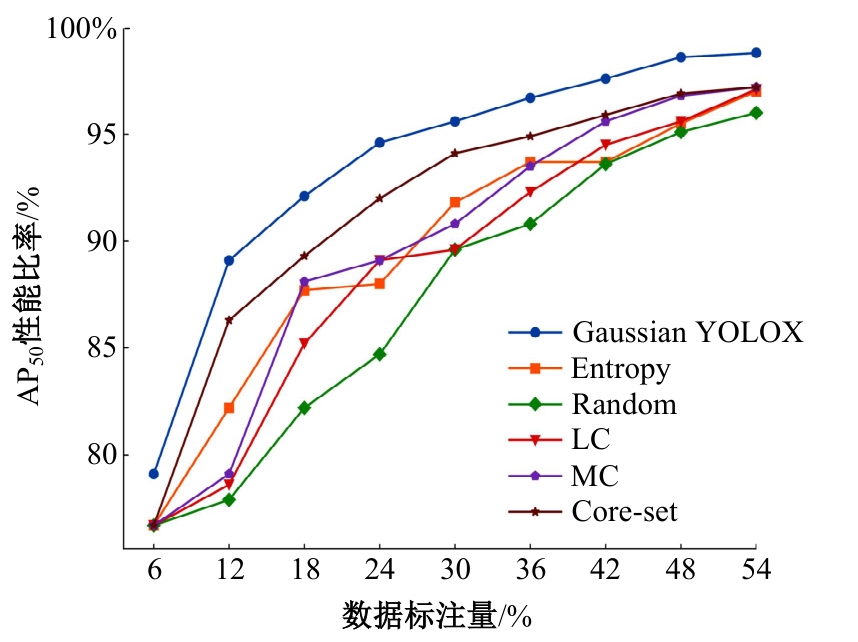

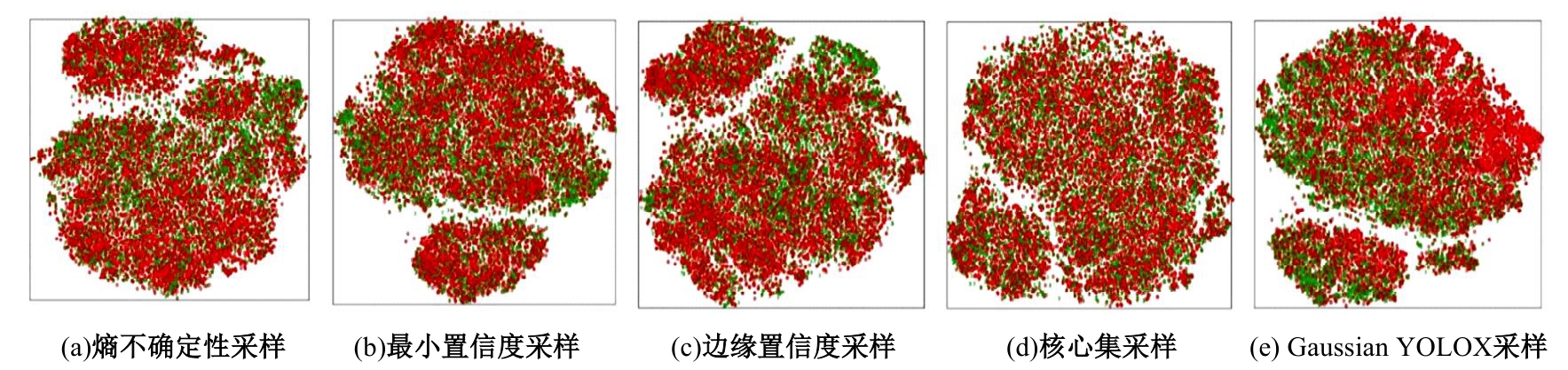

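For autonomous vehicles, perceiving surrounding objects is an important means of ensuring traffic safety. Object detection models based on deep learning are widely used for this purpose, but they require massive amounts of annotated data for training. This paper proposes an active visual object detection model that uses a Gaussian mixture distribution to estimate the uncertainty of unlabeled images, thereby reducing the dependence of model training on annotated data. First, a mixture density network is adopted as the detection head: taking the image features extracted by a deep neural network as input, it estimates the probability distributions of the classification and localization of each predicted bounding box. Second, the classification scores of the predicted boxes are mapped into probability space, the classification uncertainty of each object is computed via margin uncertainty, and its localization uncertainty is measured by the variance of the predicted box location. Finally, the least stable (most uncertain) samples are selected for annotation. Results on the VOC dataset show that, compared with other typical active learning sampling strategies, the proposed model achieves the best performance: using only 54% of the annotated data, it reaches 98.8% of the performance of YOLOX trained with conventional supervised learning, saving nearly 45% of the annotation effort.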
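The uncertainty-scoring and sample-selection steps summarized above can be illustrated with a small sketch. This is not the authors' implementation; several details are assumptions made only for illustration. The `CoordMixture`/`BoxPrediction` containers are hypothetical stand-ins for the mixture density head's output. Margin uncertainty is taken as 1 − (p₁ − p₂), where p₁ and p₂ are the two largest softmax probabilities. The localization variance of a Gaussian mixture is computed with the law of total variance, Σₖ πₖσₖ² + Σₖ πₖ(μₖ − μ̄)², and averaged over the box coordinates. The two uncertainties are combined with a hypothetical weight `alpha`, and an image is scored by its most uncertain box.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass
class CoordMixture:
    """Hypothetical MDN output for one box coordinate: K 1-D Gaussian components."""
    weights: Sequence[float]    # mixing coefficients pi_k (assumed to sum to 1)
    means: Sequence[float]      # component means mu_k
    variances: Sequence[float]  # component variances sigma_k^2


@dataclass
class BoxPrediction:
    """Hypothetical per-box output: raw class scores plus one mixture per coordinate."""
    logits: Sequence[float]
    coords: Sequence[CoordMixture]


def softmax(scores: Sequence[float]) -> List[float]:
    """Map raw classification scores into probability space."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def margin_uncertainty(probs: Sequence[float]) -> float:
    """1 - (p_top1 - p_top2); near 1 when the two best classes are tied. Needs >= 2 classes."""
    top1, top2 = sorted(probs, reverse=True)[:2]
    return 1.0 - (top1 - top2)


def mixture_variance(c: CoordMixture) -> float:
    """Total variance of a 1-D Gaussian mixture via the law of total variance."""
    mu_bar = sum(w * m for w, m in zip(c.weights, c.means))
    within = sum(w * v for w, v in zip(c.weights, c.variances))                # spread inside components
    between = sum(w * (m - mu_bar) ** 2 for w, m in zip(c.weights, c.means))  # disagreement between components
    return within + between


def box_uncertainty(box: BoxPrediction, alpha: float = 1.0) -> float:
    """Classification margin uncertainty plus alpha times the mean coordinate variance (assumed combination)."""
    cls_u = margin_uncertainty(softmax(box.logits))
    loc_u = sum(mixture_variance(c) for c in box.coords) / len(box.coords)
    return cls_u + alpha * loc_u


def image_uncertainty(boxes: Sequence[BoxPrediction], alpha: float = 1.0) -> float:
    """Score an image by its most uncertain box (max aggregation is an assumption)."""
    return max((box_uncertainty(b, alpha) for b in boxes), default=0.0)


def select_for_labeling(pool: Dict[str, Sequence[BoxPrediction]], budget: int,
                        alpha: float = 1.0) -> List[str]:
    """Return the ids of the `budget` most uncertain unlabeled images."""
    ranked = sorted(pool, key=lambda img_id: image_uncertainty(pool[img_id], alpha), reverse=True)
    return ranked[:budget]


# Toy unlabeled pool: one confidently detected box vs. one ambiguous box
# whose class scores are tied and whose coordinates have two separated modes.
pool = {
    "confident": [BoxPrediction([5.0, 0.0, 0.0], [CoordMixture([1.0], [0.5], [0.01])] * 4)],
    "ambiguous": [BoxPrediction([1.0, 1.0, 0.0],
                                [CoordMixture([0.5, 0.5], [0.2, 0.8], [0.01, 0.01])] * 4)],
}
print(select_for_labeling(pool, budget=1))  # ['ambiguous']
```

The between-component term is what lets a mixture head express localization doubt that a single Gaussian would hide: the ambiguous box's components are individually tight (variance 0.01), yet their separated means raise the total variance to 0.1.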

CLC number:

- TP391
