Journal of Jilin University (Engineering and Technology Edition) ›› 2025, Vol. 55 ›› Issue (12): 4063-4071. doi: 10.13229/j.cnki.jdxbgxb.20250014

• Computer Science and Technology •

Image manipulation localization method based on boundary uncertainty learning

Hai-peng CHEN1,2, Hong-xin LIU1,2, Hui KANG1,2, Xue-jie LIU1

- 1. College of Computer Science and Technology, Jilin University, Changchun 130012, China

2. Key Laboratory of Symbolic Computation and Knowledge Engineering of Ministry of Education, Jilin University, Changchun 130012, China

Abstract:

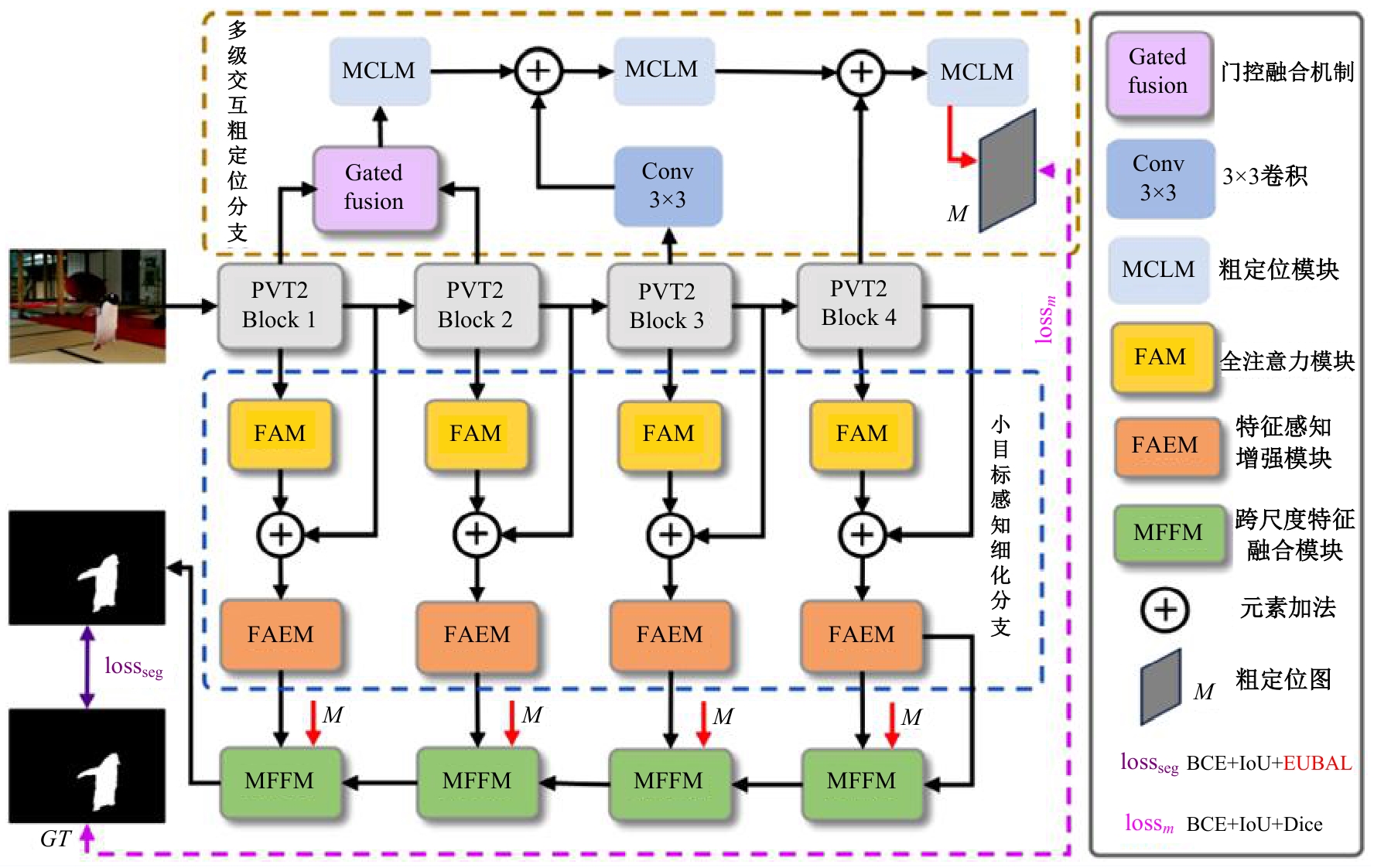

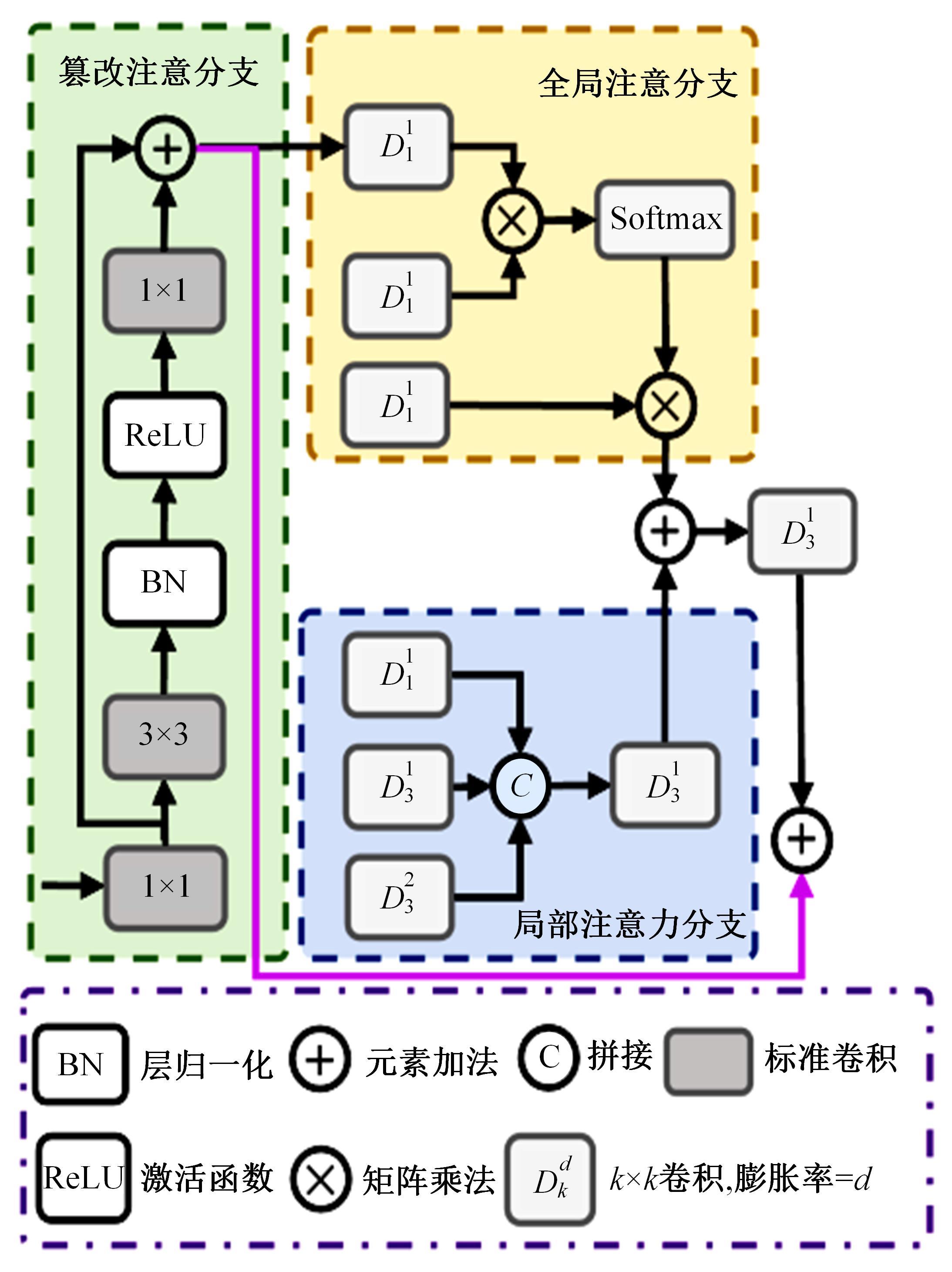

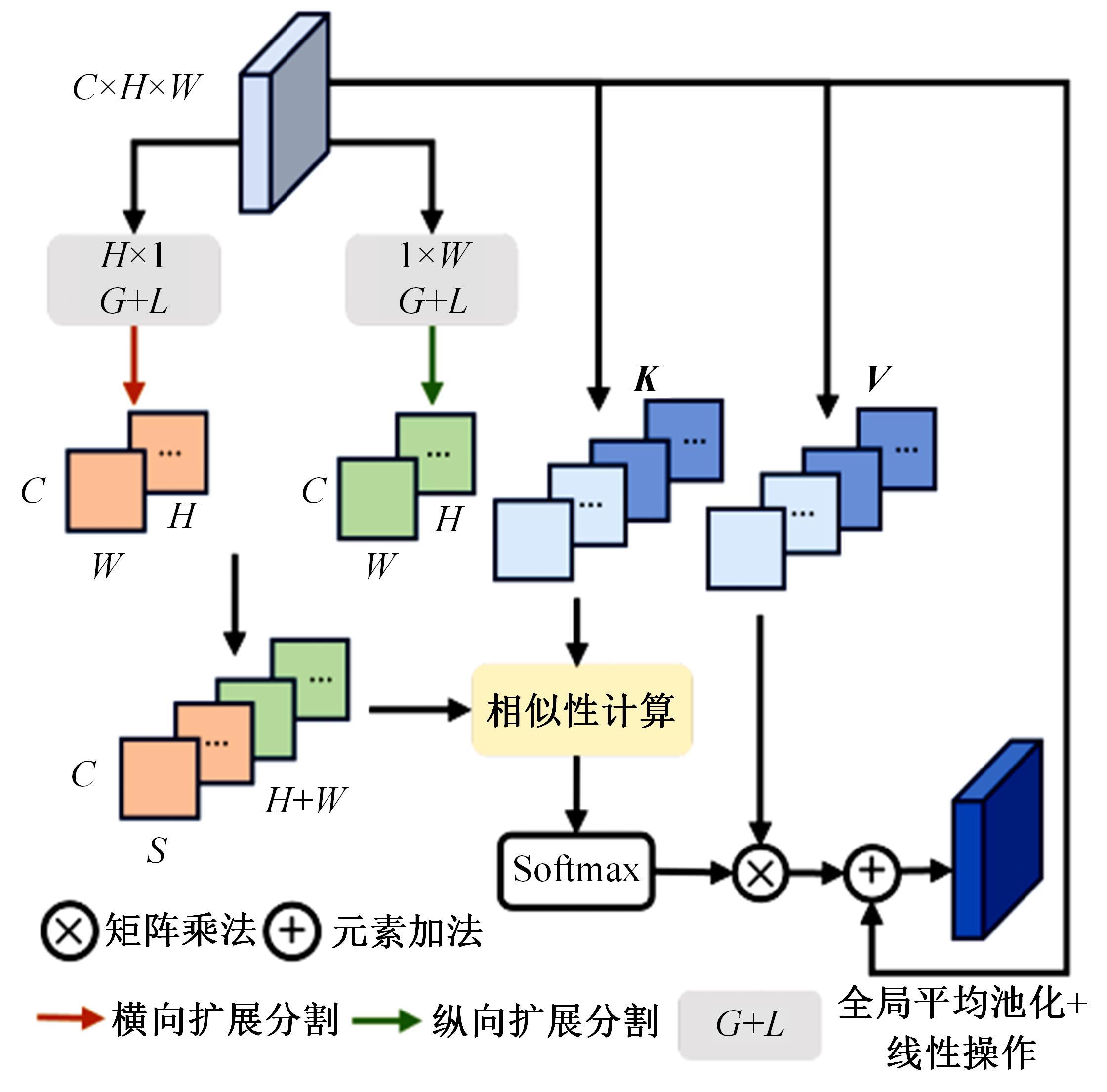

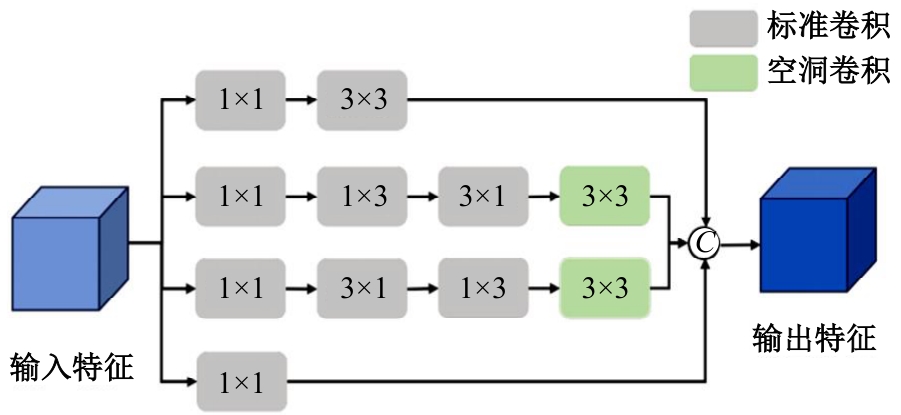

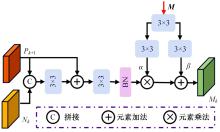

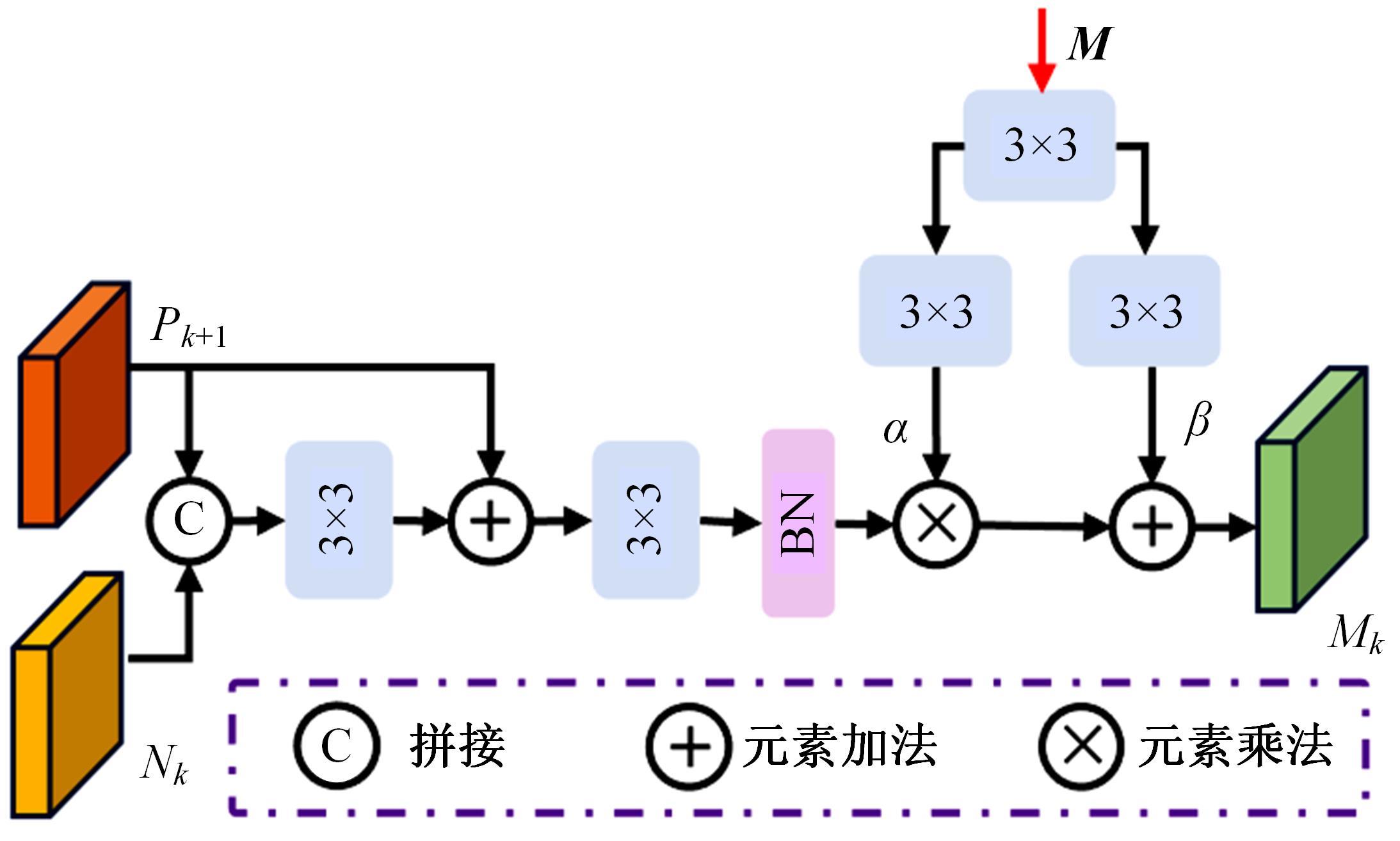

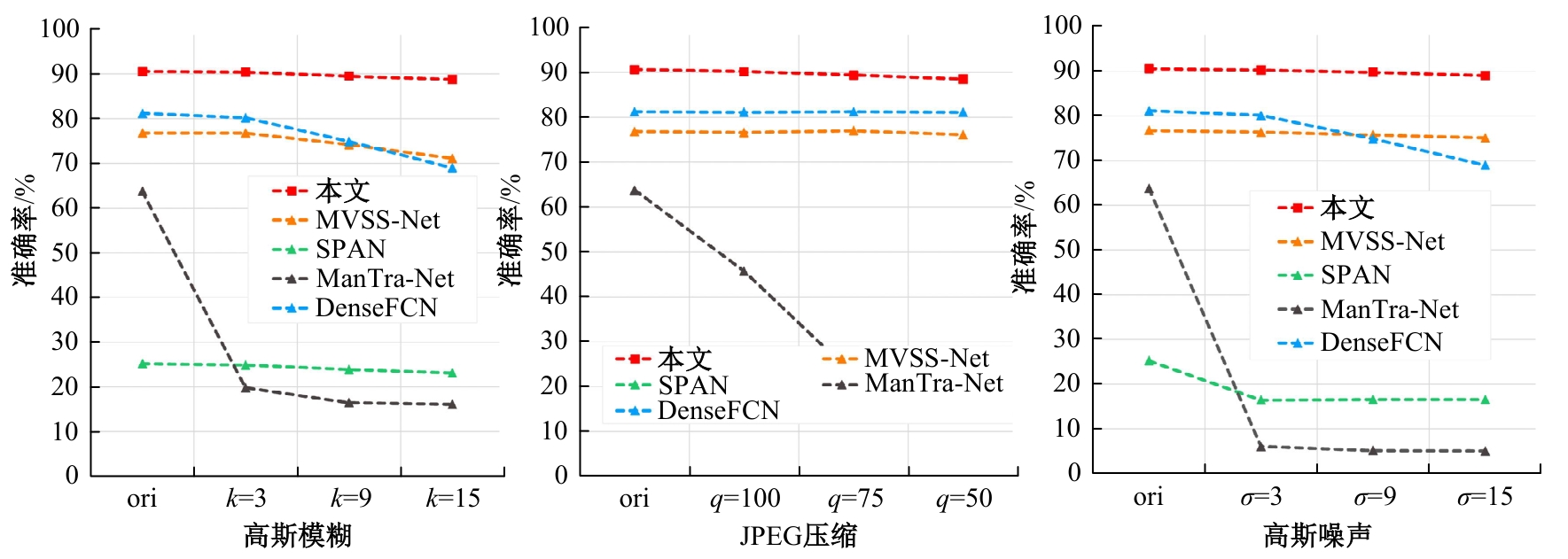

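Existing image manipulation localization methods extract features at a single scale, produce false and missed detections because small manipulated regions are easily confused with the background, and yield predictions with high uncertainty. To address these problems, an image manipulation localization method based on boundary uncertainty learning is proposed. First, a Pyramid Vision Transformer extracts basic features from the manipulated image. Second, a multi-level interactive coarse localization branch generates a coarse localization map. Third, a small-target-aware refinement branch improves the ability to perceive and localize small manipulated regions. Next, a multi-scale feature fusion module enables thorough interaction and fusion of multi-scale features. Finally, an entropy-based boundary uncertainty-aware loss is proposed for auxiliary supervision, which greatly reduces the uncertainty of the predictions. In-domain and cross-domain experiments on five commonly used public image manipulation datasets show that the proposed method localizes manipulated regions accurately and outperforms other methods.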
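The abstract does not give the formula for the entropy-based boundary uncertainty-aware loss, so the following is only an illustrative sketch of the general idea, not the authors' formulation. It assumes the loss averages the per-pixel binary entropy of the predicted manipulation probability over a thin band around the ground-truth boundary. The function names, the `radius` parameter, and the band definition are all hypothetical, and plain Python lists stand in for the tensors a real training loop would use.

```python
import math


def binary_entropy(p, eps=1e-7):
    """Shannon entropy (in nats) of a Bernoulli probability p, clipped for stability."""
    p = min(max(p, eps), 1.0 - eps)
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))


def boundary_band(mask, radius=1):
    """Flag pixels that have a differently labelled pixel within `radius` (assumed band)."""
    h, w = len(mask), len(mask[0])
    band = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] != mask[y][x]:
                        band[y][x] = 1
    return band


def boundary_uncertainty_loss(prob, mask, radius=1):
    """Mean prediction entropy over the boundary band; 0.0 when the band is empty."""
    band = boundary_band(mask, radius)
    total, count = 0.0, 0
    for y, row in enumerate(band):
        for x, flag in enumerate(row):
            if flag:
                total += binary_entropy(prob[y][x])
                count += 1
    return total / count if count else 0.0


# Toy ground-truth mask: a 2x2 manipulated region inside a 4x4 image.
mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
# Two hypothetical predictions: one confident, one hesitant.
confident = [[0.99 if m else 0.01 for m in row] for row in mask]
hesitant = [[0.6 if m else 0.4 for m in row] for row in mask]

print(boundary_uncertainty_loss(confident, mask))
print(boundary_uncertainty_loss(hesitant, mask))
```

Under these assumptions the hesitant prediction receives the larger loss, so minimizing the term as an auxiliary objective would push boundary pixels toward confident outputs. In practice such a term would be combined with a standard segmentation loss and implemented with differentiable tensor operations.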

CLC Number:

- TP391

