Journal of Jilin University (Engineering and Technology Edition) ›› 2025, Vol. 55 ›› Issue (10): 3296-3308. doi: 10.13229/j.cnki.jdxbgxb.20240111

• Computer Science and Technology •

Image dehazing algorithm based on contrast learning and generative adversarial network

Xiang-long LUO1, Xin-yu WEI1, Mao-jun ZHAO2, Ruo-chen LIU1

- 1. College of Information Engineering, Chang’an University, Xi’an 710064, China

2. Inspur Digital Enterprise Technology Limited, Centralized Key Account Division, Ji’nan 250101, China

Abstract:

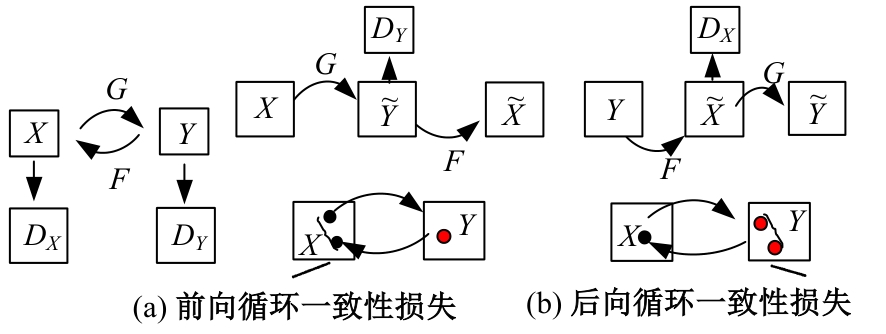

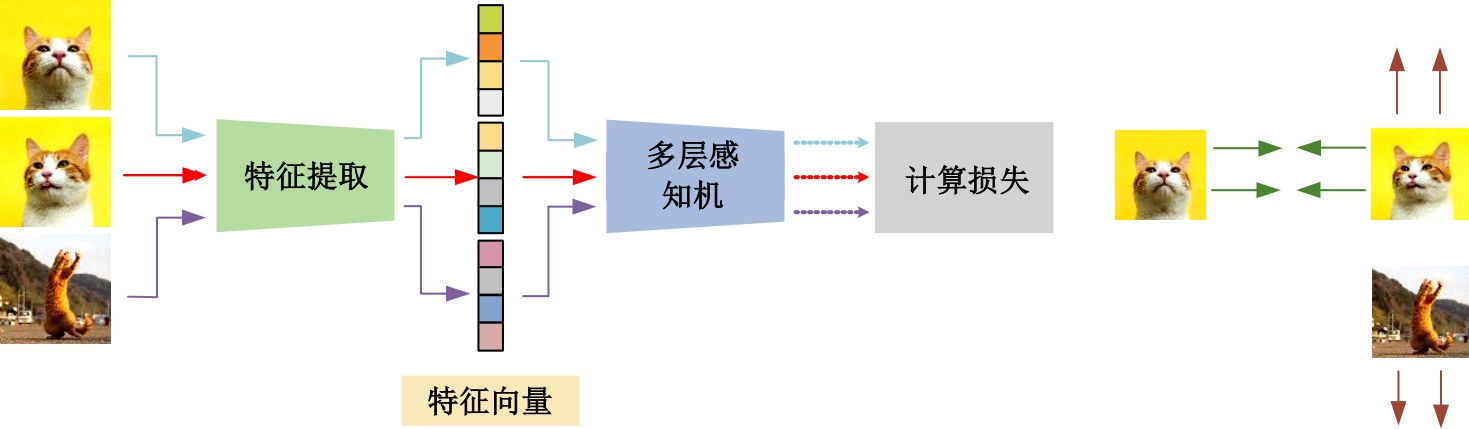

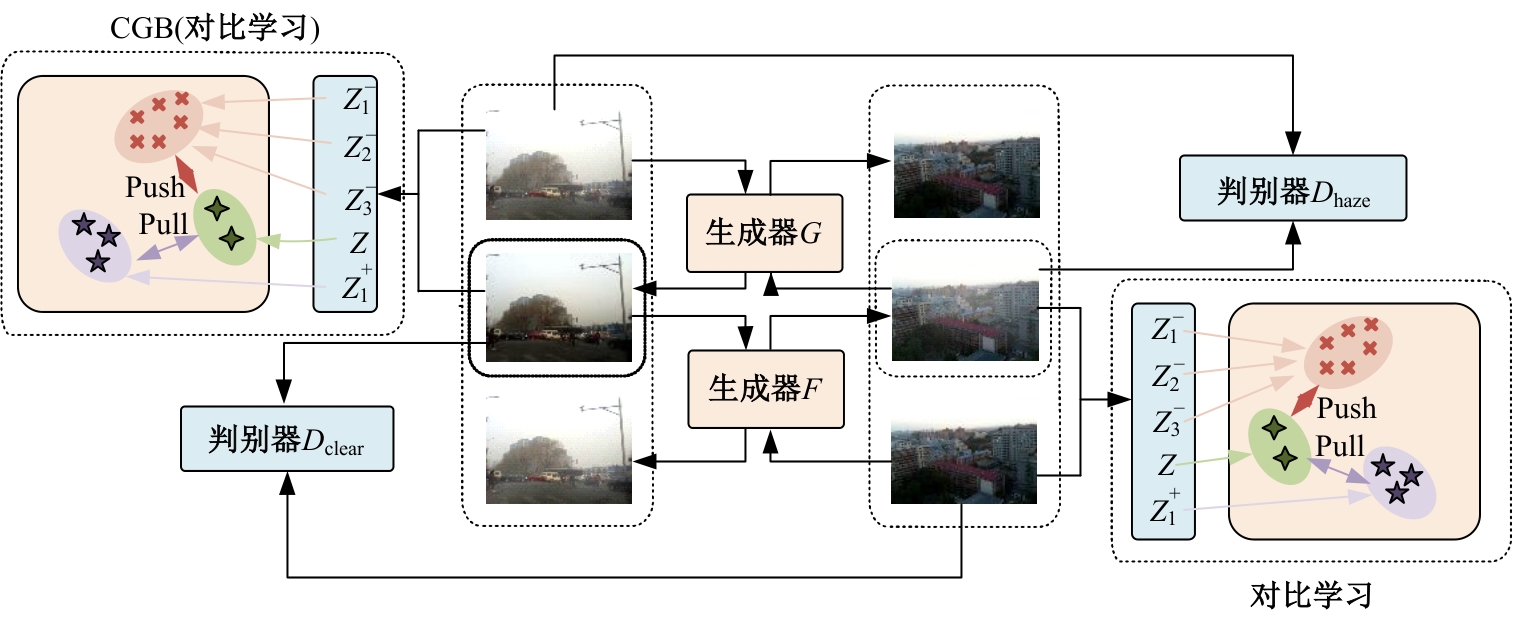

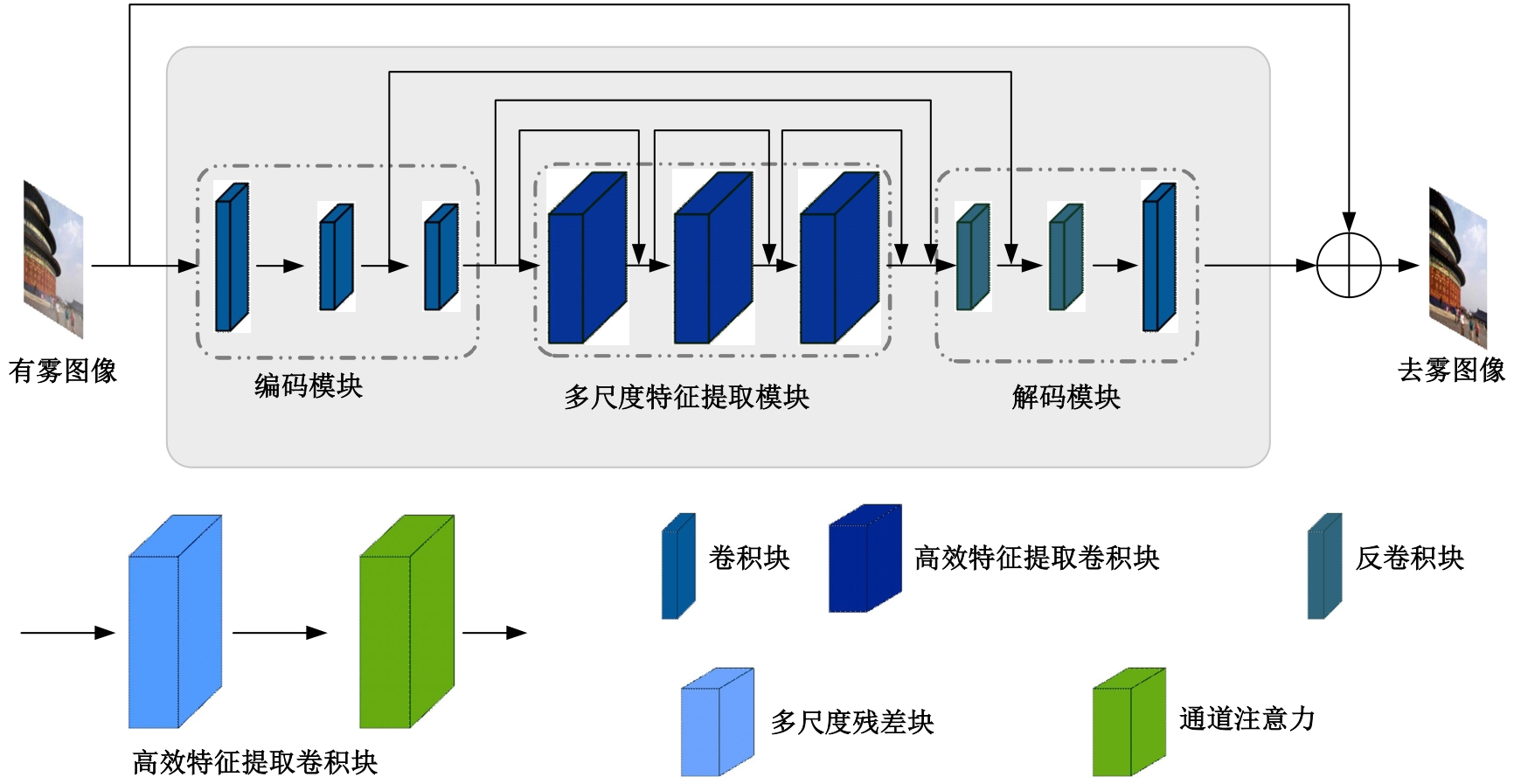

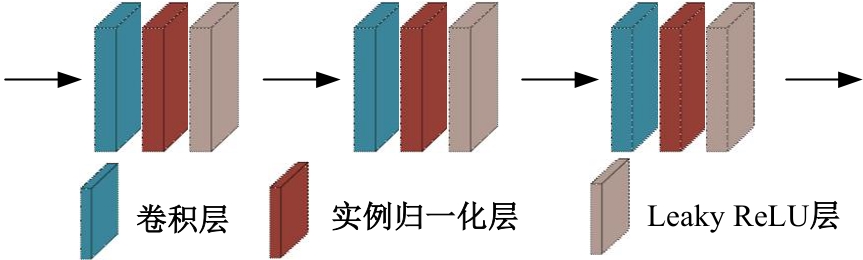

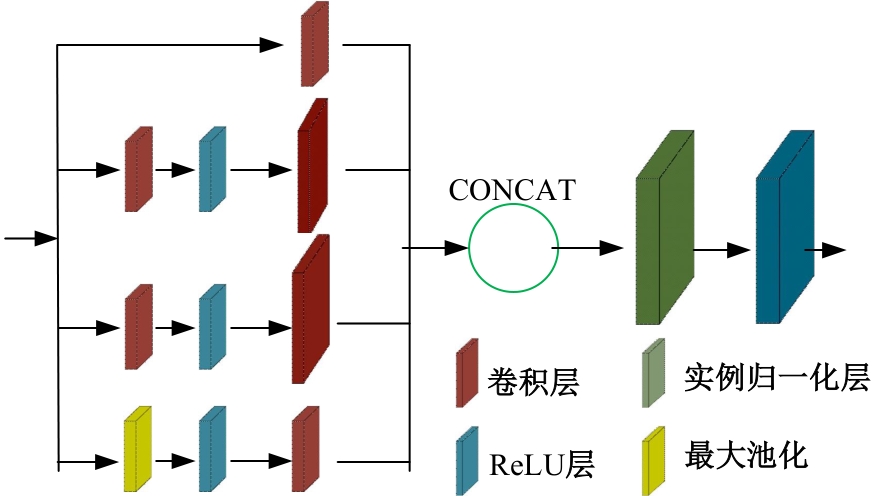

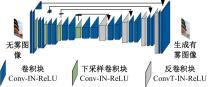

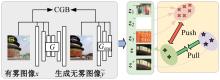

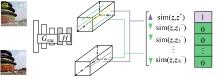

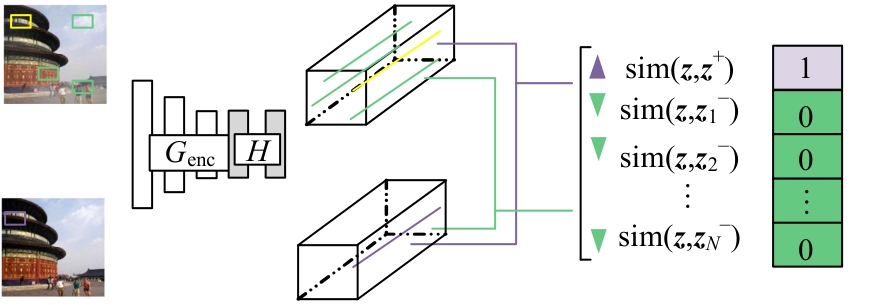

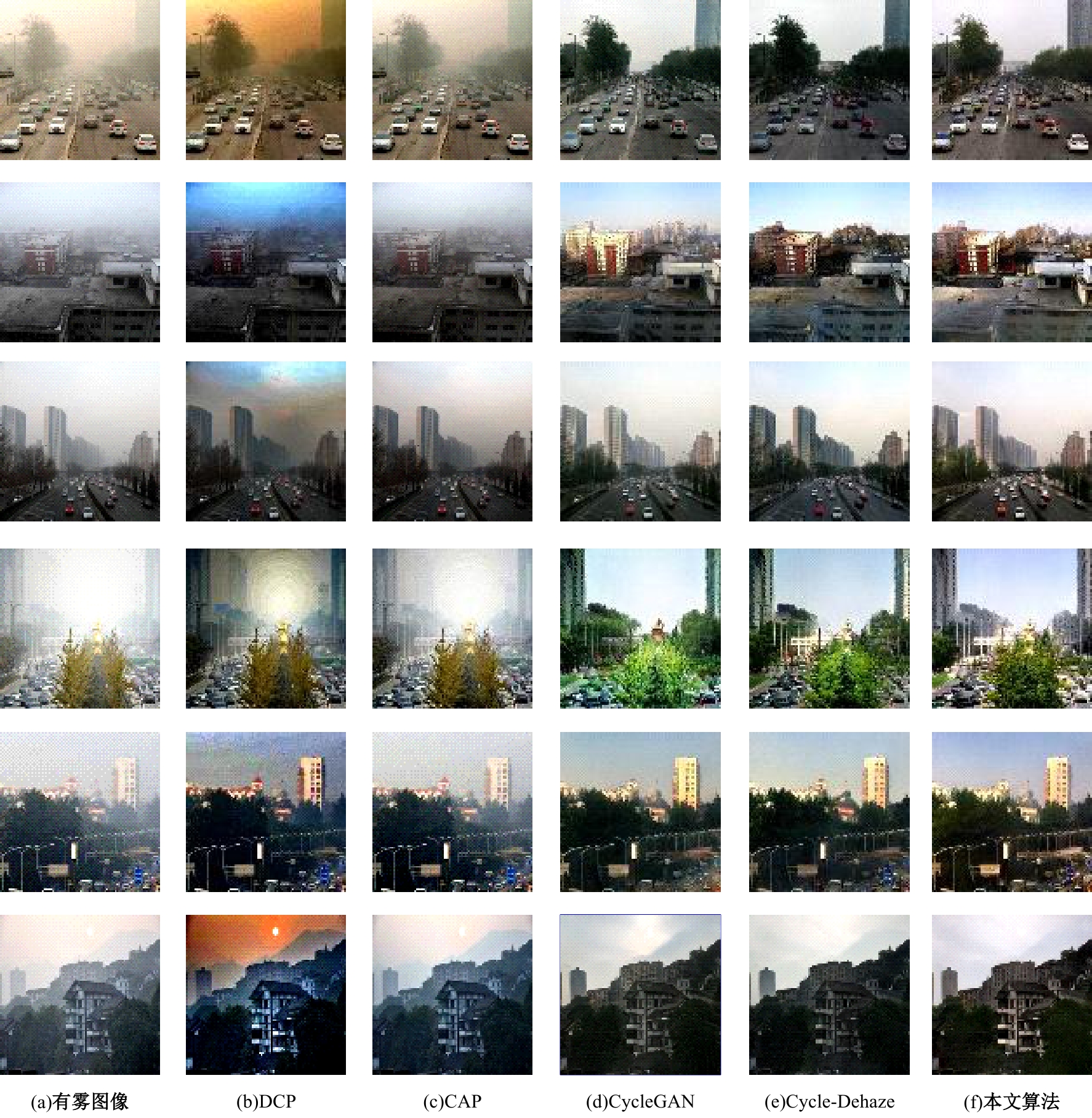

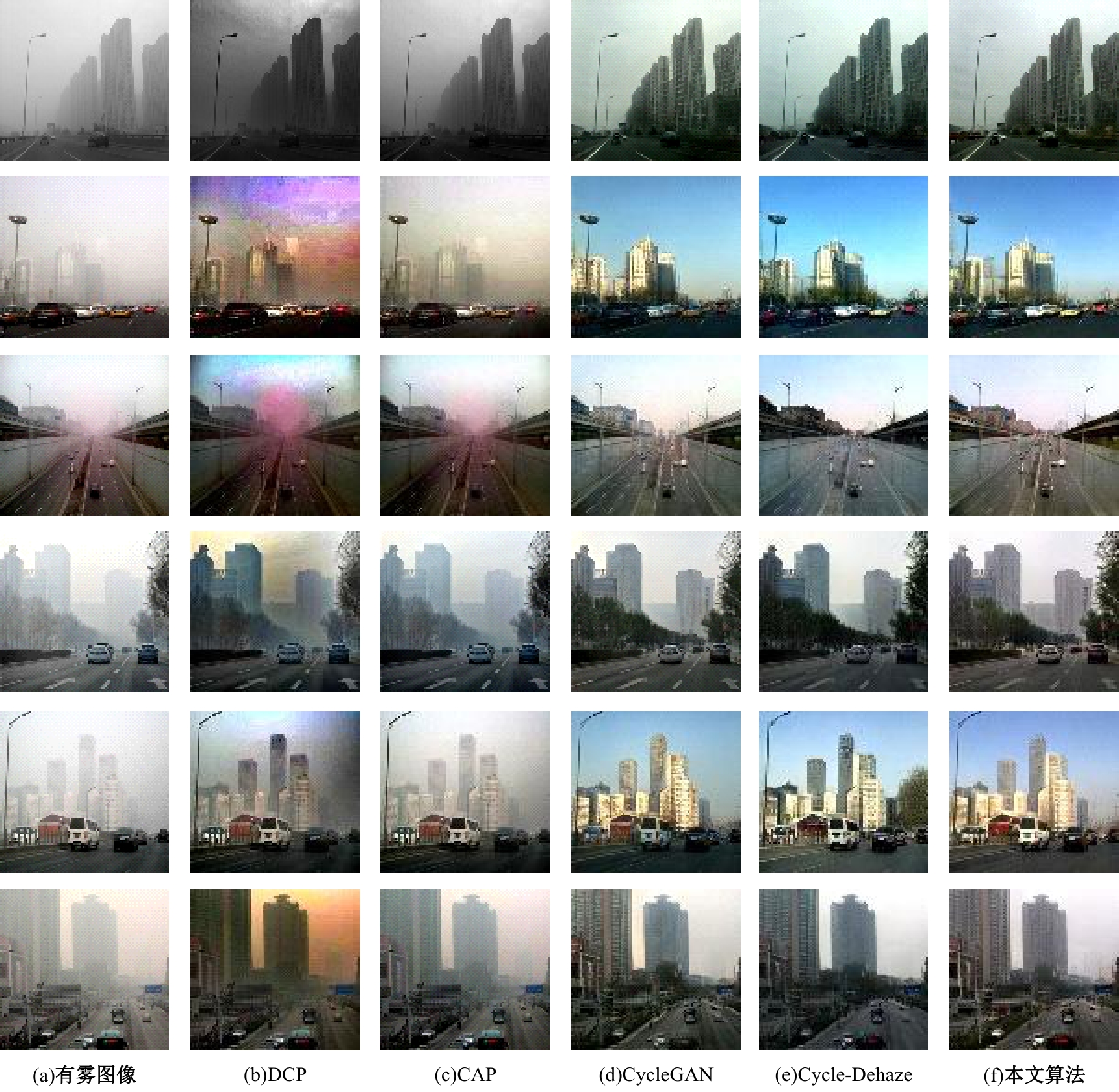

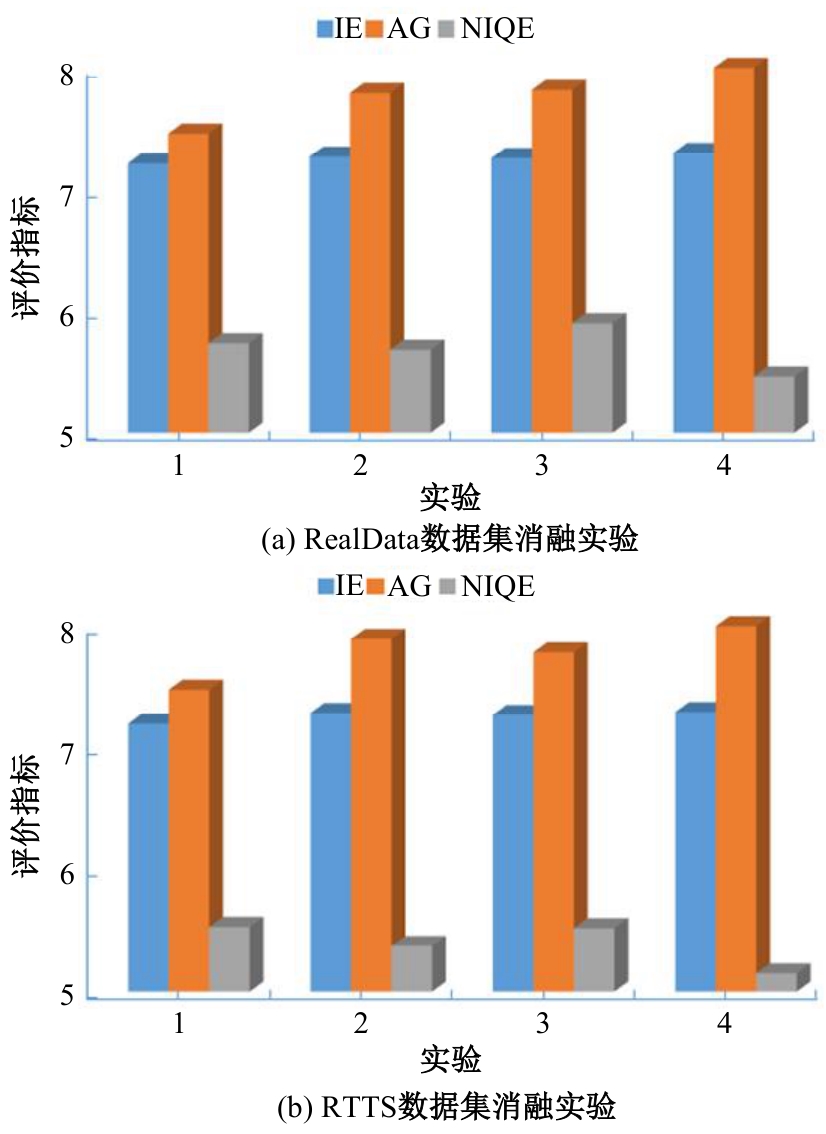

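To address the reliance of current dehazing algorithms on paired hazy and haze-free images, and the cost incurred by supervised learning, an image dehazing algorithm based on contrastive learning and a cycle-consistent generative adversarial network is proposed. First, the cycle-consistent generative adversarial network is trained on unpaired hazy and clear images, which increases the practical value of the algorithm in real scenes and alleviates the domain shift problem of dehazing algorithms. Second, a contrastive guidance branch is designed to learn the latent feature distribution of images; it implicitly constrains the embeddings of different samples in the deep feature space, mines the features shared by hazy and clear images, pulls similar features closer together, preserves the mutual information between the two image classes, and maintains content consistency, thereby improving dehazing performance. Third, a frequency loss function is introduced to constrain the generator output and reduce information loss in the frequency domain, which further preserves image content and structure, reduces blur and distortion in the dehazed image, and improves the quality and sharpness of the generated image. Experimental results show that, compared with current mainstream traditional and deep-learning-based dehazing algorithms, the proposed model achieves higher information entropy and average gradient and retains richer detail, making it an effective image dehazing algorithm.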
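The abstract names two evaluation metrics, information entropy and average gradient, and a frequency-domain loss, but gives no formulas. The following is a minimal NumPy sketch under common textbook definitions. The function names, the forward-difference form of the gradient, and the `alpha` spectrum weighting are illustrative assumptions, not the authors' implementation.

```python
import numpy as np


def information_entropy(gray: np.ndarray) -> float:
    """Shannon entropy (in bits) of the histogram of an 8-bit grayscale image."""
    hist = np.bincount(gray.astype(np.uint8).ravel(), minlength=256)
    p = hist[hist > 0] / gray.size          # probabilities of occupied gray levels
    return float(-np.sum(p * np.log2(p)))


def average_gradient(gray: np.ndarray) -> float:
    """Mean of sqrt((dx^2 + dy^2) / 2) using forward differences (assumed form)."""
    g = gray.astype(np.float64)
    dx = g[:-1, 1:] - g[:-1, :-1]           # horizontal difference
    dy = g[1:, :-1] - g[:-1, :-1]           # vertical difference
    return float(np.mean(np.sqrt((dx ** 2 + dy ** 2) / 2.0)))


def frequency_loss(pred: np.ndarray, target: np.ndarray, alpha: float = 1.0) -> float:
    """Weighted squared distance between the 2-D spectra of two images.

    The weight |F_pred - F_target|**alpha, scaled to [0, 1], emphasises the
    frequencies with the largest error. This weighting is an assumption made
    for illustration; the abstract does not specify the loss.
    """
    fp = np.fft.fft2(pred.astype(np.float64), norm="ortho")
    ft = np.fft.fft2(target.astype(np.float64), norm="ortho")
    dist = np.abs(fp - ft)                  # per-frequency spectral distance
    weight = dist ** alpha
    peak = weight.max()
    if peak > 0:                            # skip scaling when the spectra match exactly
        weight = weight / peak
    return float(np.mean(weight * dist ** 2))
```

With `alpha=0` the weighting disappears and, because the orthonormal FFT preserves energy (Parseval's theorem), the loss equals the pixel-wise mean squared error. A nonzero `alpha` is what makes the frequency-domain term behave differently from a spatial one.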

CLC number:

- TP391
