Journal of Jilin University (Engineering and Technology Edition), 2025, Vol. 55, Issue (7): 2383-2392. doi: 10.13229/j.cnki.jdxbgxb.20240913

• Computer Science and Technology •

Semantic matching model based on BERTGAT-Contrastive

Jing-shu YUAN, Wu LI, Xing-yu ZHAO, Man YUAN

- School of Computer and Information Technology, Northeast Petroleum University, Daqing 163318, China

Abstract:

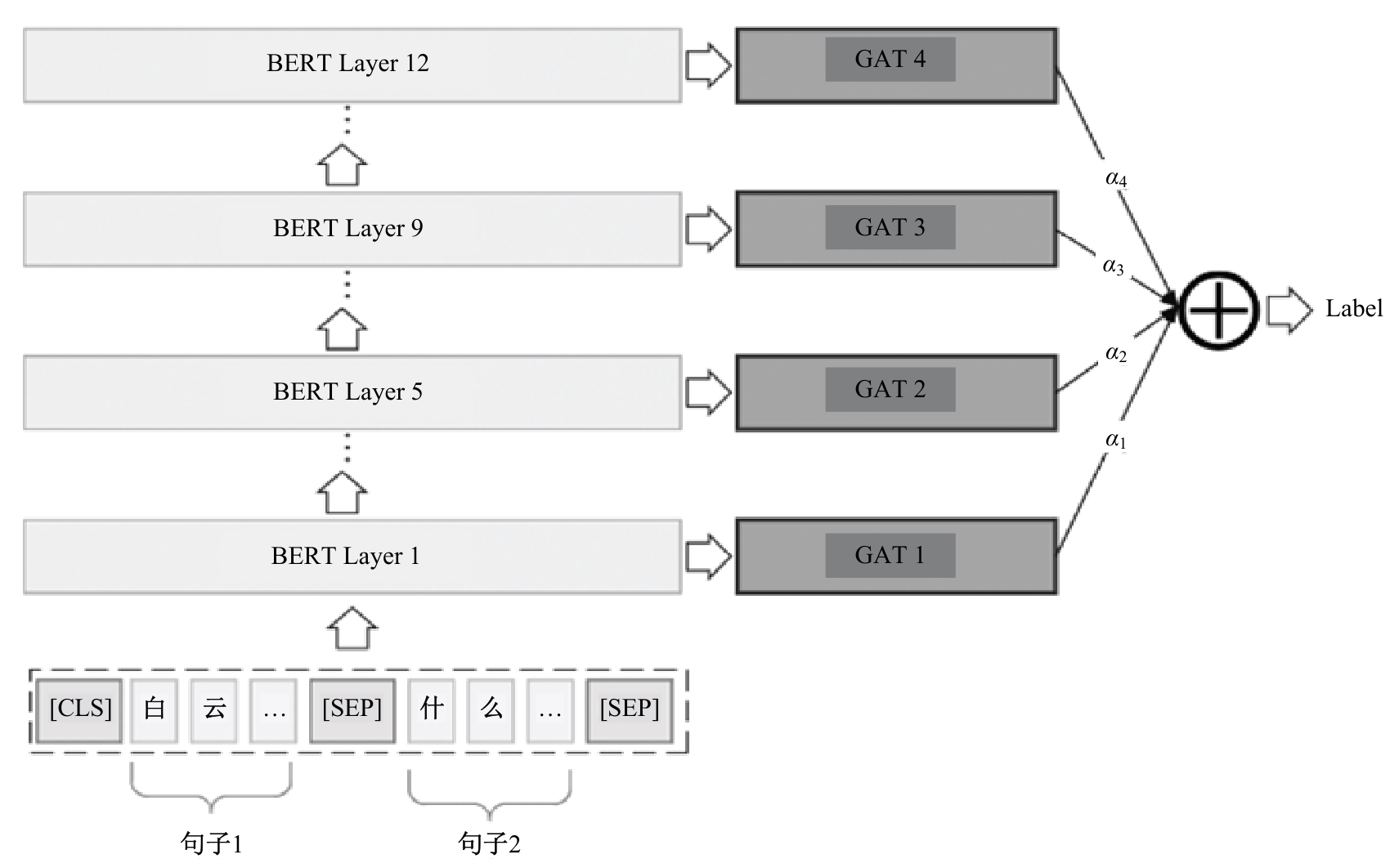

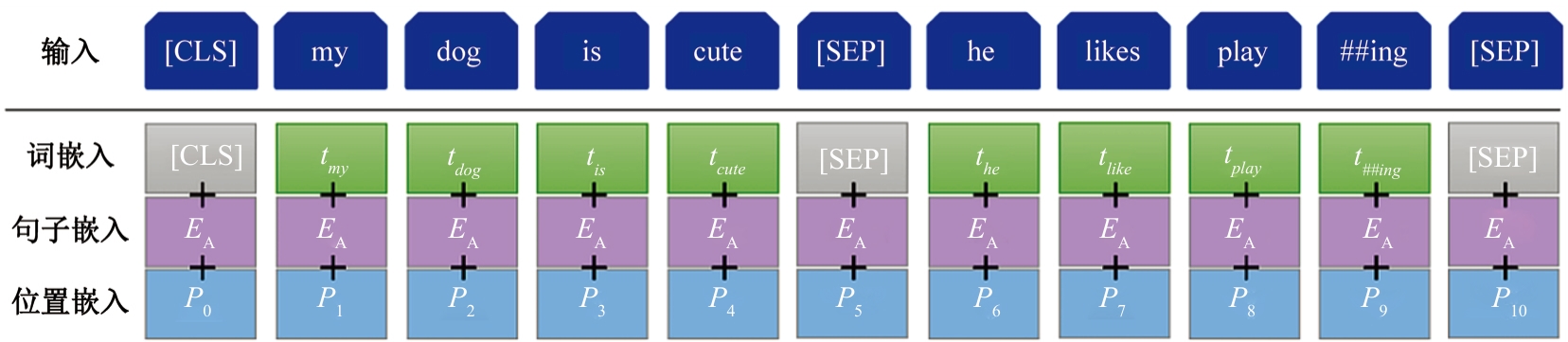

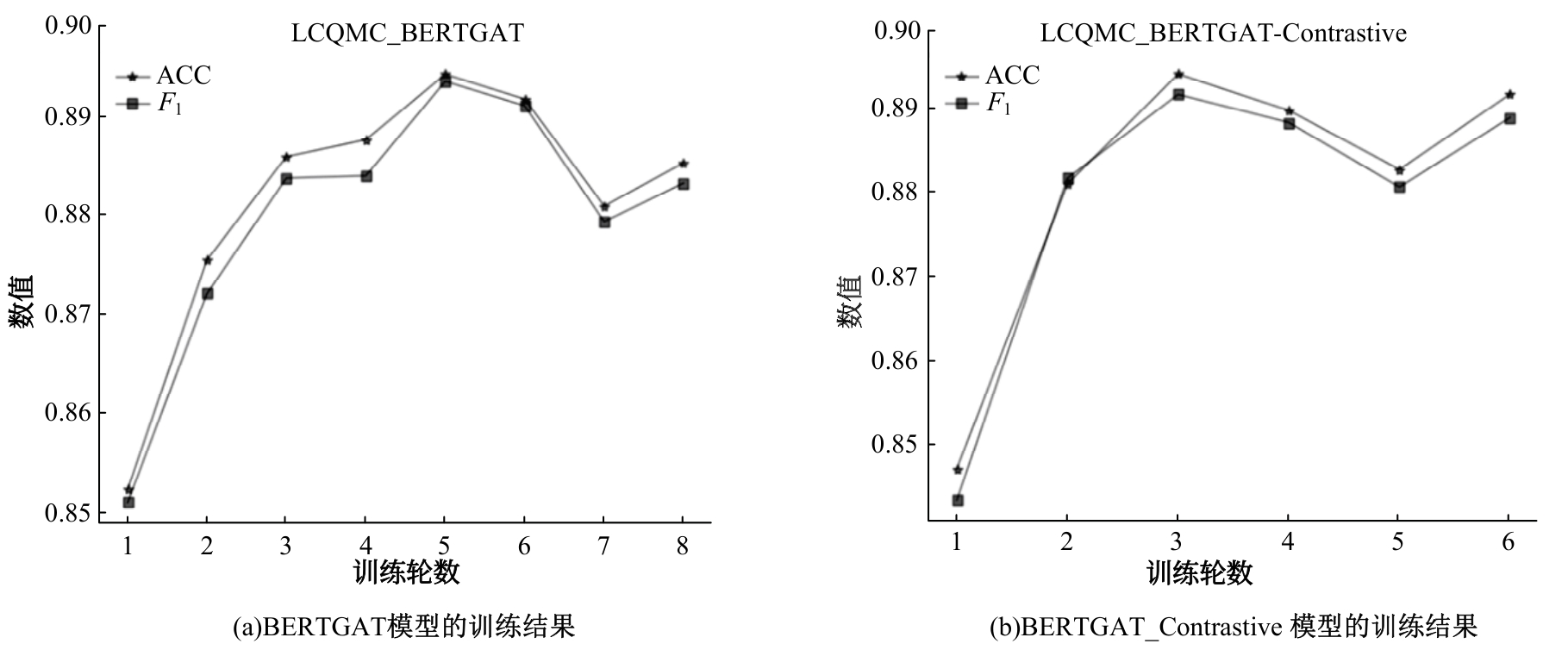

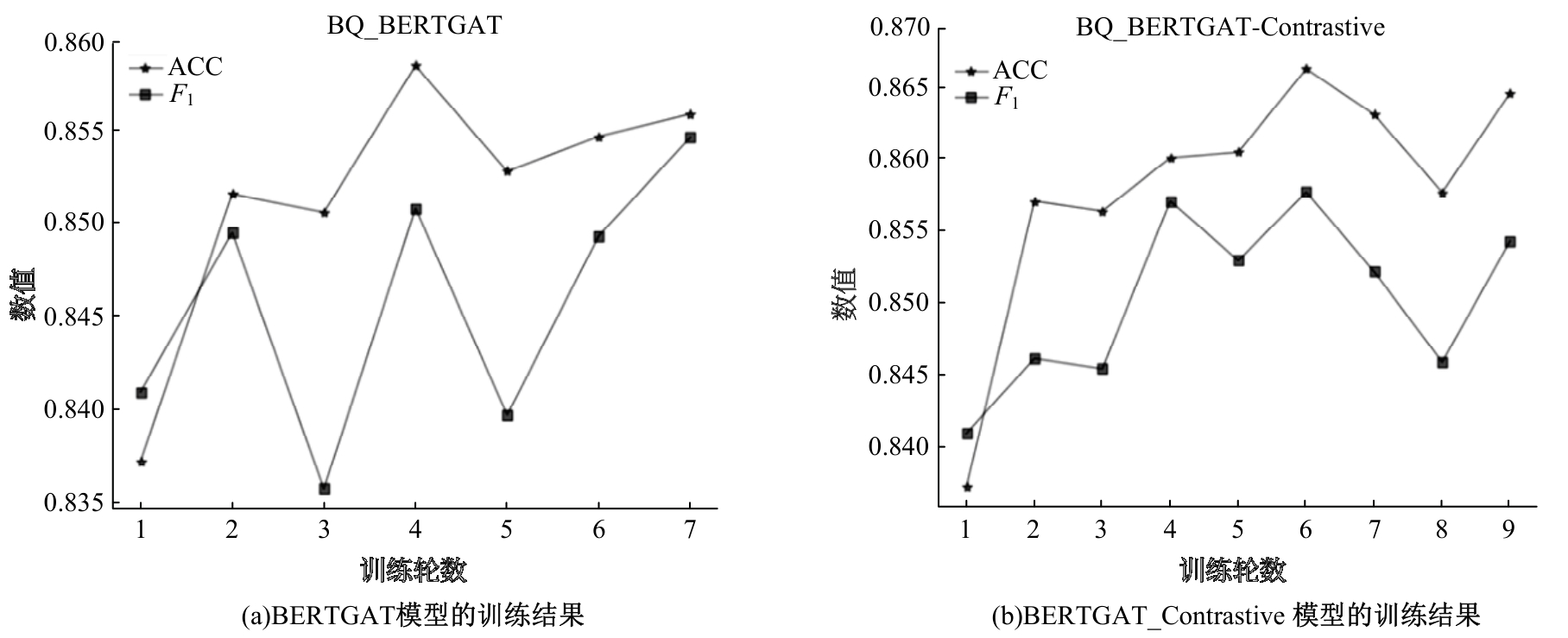

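Predicting from only the last layer of BERT discards some of the lexical, syntactic, and semantic information in the text. To address this problem, a BERTGAT model based on BERT and the graph attention network (GAT) is proposed. First, the hidden-state matrices and attention matrices of several intermediate BERT layers serve, respectively, as the node feature matrices and adjacency matrices of a corresponding number of GATs. A dynamic weighting strategy weights the different GAT layers, and an activation function is then applied to judge the similarity between sentences. Second, so that BERTGAT can better learn the language representations between sentence pairs, a contrastive learning method is introduced on top of it, giving the BERTGAT-Contrastive model, which strengthens the model's ability to recognize semantic similarity between texts. Finally, experiments on the LCQMC and BQ datasets show that the proposed model is markedly more effective than contrastive learning methods, with clear improvements in both accuracy and F1 score.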
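The data flow described in the abstract can be illustrated with a small, dependency-free sketch. It is not the authors' implementation, and several details are assumptions because the abstract does not specify them: each layer's attention matrix is taken as already averaged over heads, an edge is kept when the attention value is at least the uniform value 1/n, the dynamic weights are a softmax over one learnable scalar per layer, and the final activation is a sigmoid. All function and parameter names (`gat_layer`, `bertgat_score`, `layer_logits`, and so on) are hypothetical, and random numbers stand in for real BERT outputs.

```python
import math
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def gat_layer(h, attn, w, a_src, a_dst):
    """Single-head graph attention over tokens.

    h: n x d hidden states (node features). attn: n x n attention matrix,
    used as a soft adjacency. Assumption: edge i -> j exists when
    attn[i][j] >= 1/n, and every node keeps a self-loop.
    """
    n = len(h)
    wh = matmul(h, w)  # n x k projected node features
    src = [sum(x * y for x, y in zip(row, a_src)) for row in wh]
    dst = [sum(x * y for x, y in zip(row, a_dst)) for row in wh]
    out = []
    for i in range(n):
        nbrs = [j for j in range(n) if j == i or attn[i][j] >= 1.0 / n]
        # e_ij = LeakyReLU(a^T [Wh_i || Wh_j]), split into source and target parts
        e = [src[i] + dst[j] for j in nbrs]
        alpha = softmax([x if x > 0 else 0.2 * x for x in e])
        out.append([sum(al * wh[j][c] for al, j in zip(alpha, nbrs))
                    for c in range(len(wh[0]))])
    return out


def bertgat_score(hidden_states, attentions, params):
    """Run one GAT per selected layer, fuse them, return (probability, layer weights)."""
    pooled = []
    for h, attn, p in zip(hidden_states, attentions, params["layers"]):
        nodes = gat_layer(h, attn, p["w"], p["a_src"], p["a_dst"])
        pooled.append([sum(col) / len(nodes) for col in zip(*nodes)])  # mean over tokens
    weights = softmax(params["layer_logits"])  # assumed form of the dynamic weights
    fused = [sum(wt * vec[c] for wt, vec in zip(weights, pooled))
             for c in range(len(pooled[0]))]
    logit = sum(x * y for x, y in zip(fused, params["out"])) + params["bias"]
    return 1.0 / (1.0 + math.exp(-logit)), weights  # sigmoid is an assumption


def random_inputs(num_layers=3, n=5, d=4, k=3, seed=0):
    """Toy stand-ins for BERT outputs and trainable parameters."""
    rng = random.Random(seed)

    def vec(size):
        return [rng.uniform(-1, 1) for _ in range(size)]

    hidden = [[vec(d) for _ in range(n)] for _ in range(num_layers)]
    attns = [[softmax(vec(n)) for _ in range(n)] for _ in range(num_layers)]
    params = {
        "layers": [{"w": [vec(k) for _ in range(d)], "a_src": vec(k), "a_dst": vec(k)}
                   for _ in range(num_layers)],
        "layer_logits": vec(num_layers),
        "out": vec(k),
        "bias": 0.0,
    }
    return hidden, attns, params


hidden, attns, params = random_inputs()
prob, layer_weights = bertgat_score(hidden, attns, params)
print(f"match probability: {prob:.3f}")
print("layer weights:", [round(w, 3) for w in layer_weights])
```

In a real setting the hidden states and attention matrices would come from a pretrained BERT run on the concatenated sentence pair, and the parameters would be trained jointly with the classification and contrastive objectives. The contrastive term itself is not sketched here, since the abstract does not say how positive and negative pairs are formed.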

CLC number:

- TP391.1

